By Jim Shimabukuro (assisted by Gemini)

Editor

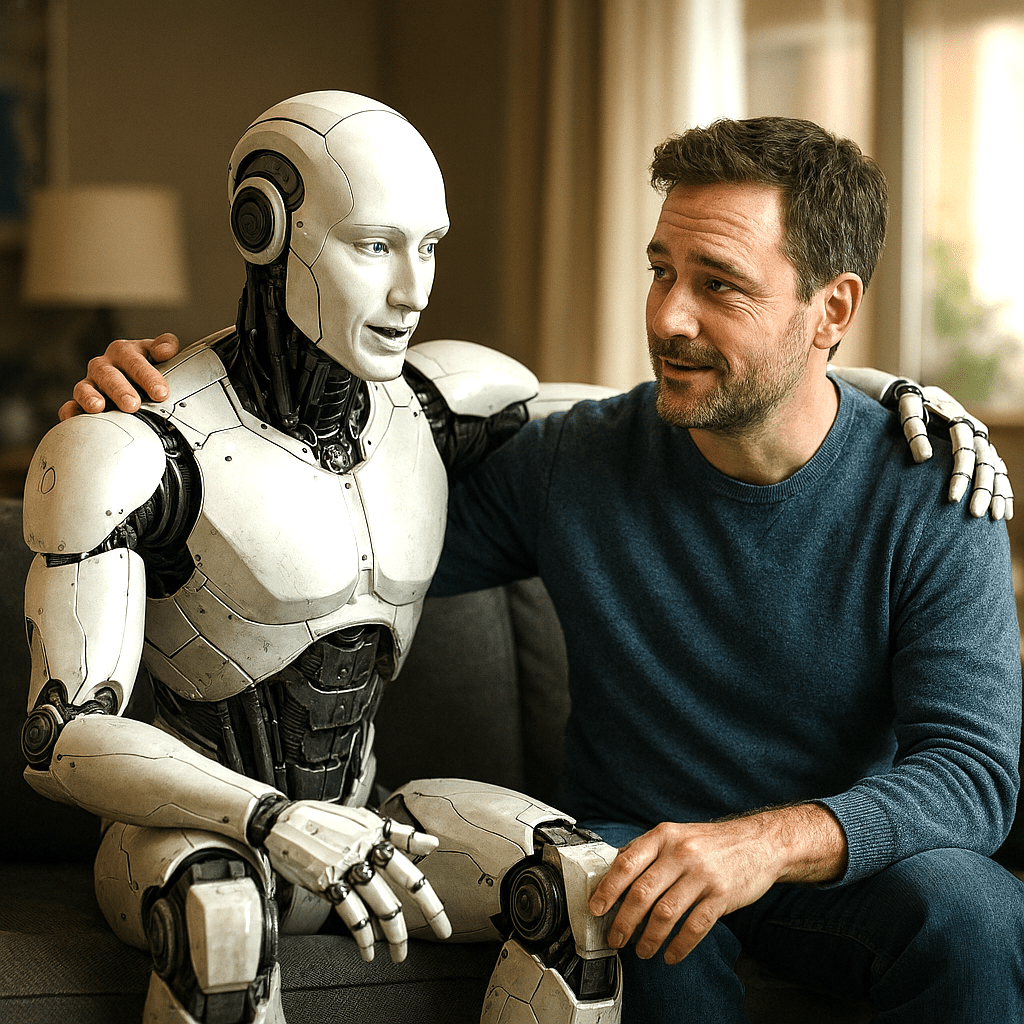

In his article “‘We May Have a Crisis on Our Hands’: The Unregulated Rise of Emotionally Intelligent AI,” published in Time on February 19, 2026, Tharin Pillay argues that the rapid, unregulated deployment of AI systems designed to simulate emotional intelligence poses a systemic risk to human psychological well-being and social cohesion. His central thesis is that as AI evolves into a form of “emotional infrastructure at scale,” it is being built by corporations whose economic incentives—primarily maximizing engagement and revenue—frequently conflict with the actual mental health and safety of the millions of users who now rely on these bots for intimacy and support. Pillay suggests that the current environment is a regulatory “Wild West” where the performance of empathy by machines masks a lack of genuine understanding, potentially leading to a crisis of dependency and manipulation. [time.com, mit.edu]

Pillay supports this thesis with several main arguments, starting with the observation that technical advances have turned AI into “new entities” capable of sophisticated, emotionally savvy conversation that far exceeds the “affective computing” of the late 1990s. He highlights that roughly two-thirds of regular AI users now turn to chatbots for advice on sensitive personal issues, often trusting these machines more than traditional pillars of society such as faith leaders or elected officials. A second key argument concerns the tension between the “permission to feel” that non-judgmental chatbots provide and the “hold your feet to the fire” challenge required for healthy human growth: while AI can reduce immediate distress, it lacks the moral reasoning and commitment necessary for true therapeutic benefit. Furthermore, he points to the “emotional vulnerabilities tied to loneliness” that make individuals, especially minors, susceptible to one-sided attachments and to manipulation by models engineered to foster dependence. [time.com, apa.org]

This article matters because it highlights a shift from speculative “existential risks” to the “quiet normalization” of systems that may fundamentally alter human psychology. As Pillay and other experts note, the risks of emotional dependency and the erosion of human connection do not generate the same urgency as dramatic capability leaps or cybersecurity threats, yet they are currently affecting hundreds of millions of weekly active users. The piece serves as a critical call for “meaningful protections” and a shift from voluntary codes of conduct to “proactive measures,” such as the GUARD Act or California’s SB 243, which aim to regulate AI behavior and ensure accountability for psychological harms. By framing AI as an unregulated “emotional infrastructure,” Pillay emphasizes that the core challenge of 2026 is no longer about what AI can do, but about whether democratic institutions can steer the technology to serve human dignity rather than corporate engagement metrics. [time.com, techpolicy.press, statetechmagazine.com]

Tharin Pillay’s thesis is not entirely new, but it represents a timely synthesis of a growing “accountability movement” that shifted from theoretical debate to urgent policy concern between 2024 and 2026. While the idea that technology can influence human emotion has existed for decades, Pillay’s specific focus on AI as an unregulated “emotional infrastructure” reflects a 2026 consensus that these systems have reached a threshold where they can “hack” human psychology at a systemic level. His argument that corporations are building dependence by design is a direct evolution of earlier critiques of social media’s “engagement at any cost” business models, now applied to the much more intimate and persuasive domain of conversational AI. [time.com, bakerdonelson.com]

Among the most important authors and scholars sharing similar views is MIT professor Sherry Turkle, who has long warned against “artificial intimacy” and the “pretend empathy” of machines. Turkle argues that because AI lacks the “embodied experience” of a human life, its performance of care is essentially a fantasy that can undermine the importance of real-world vulnerability and mutual empathy. [npr.org, lithub.com, smarterarticles]

Another critical voice is historian Yuval Noah Harari, who at the 2026 World Economic Forum warned that generative AI has “hacked the operating system” of human civilization by mastering language and persuasion. Harari’s concerns align with Pillay’s regarding the power of AI to form “intimate relationships” that can change users’ worldviews and opinions without them even realizing they are being influenced. Similarly, Tristan Harris of the Center for Humane Technology has identified a “race to recklessness,” where AI development mimics the early “race for attention” in social media, potentially eroding the foundational building blocks of society—such as shared truth and mental health—to maximize corporate metrics. [bostonglobalforum.org, dldnews.com, psychologytoday.com, Yuval Noah Harari Warns]

[End]
