By Jim Shimabukuro (assisted by Gemini)

Editor

[Update appended.]

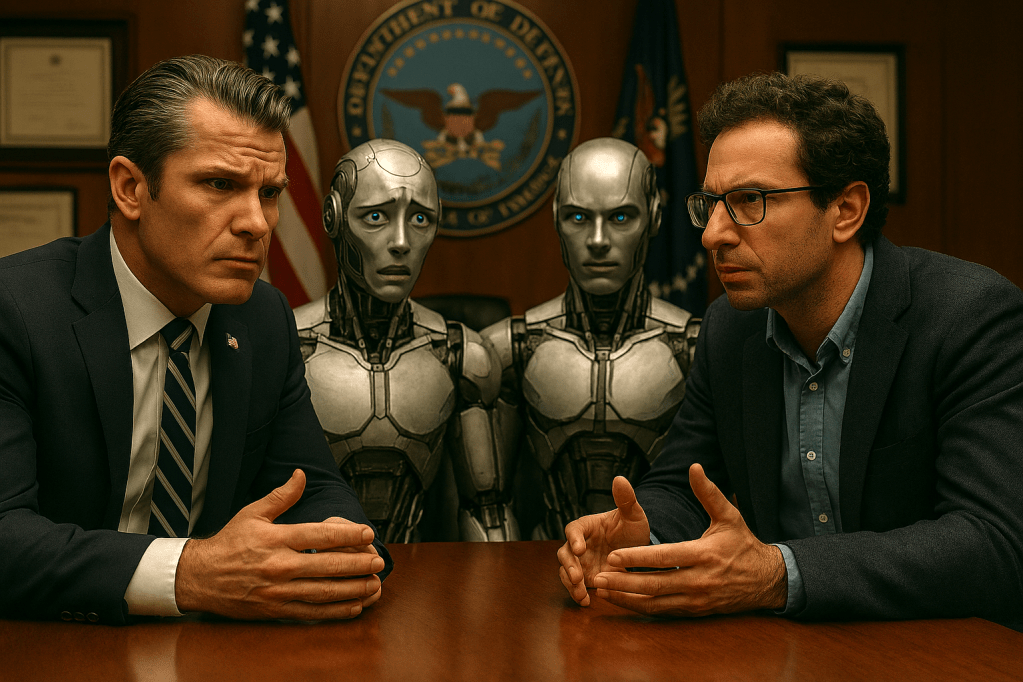

The standoff between Defense Secretary Pete Hegseth and Anthropic CEO Dario Amodei escalated into a public ultimatum on February 24, 2026, when Hegseth gave the AI firm until 5:00 p.m. this coming Friday to abandon its internal safety guardrails or face severe federal retaliation. During a tense meeting at the Pentagon, Hegseth demanded that Anthropic grant the military unrestricted access to its Claude models for “all lawful use cases,” a standard that would override the company’s existing prohibitions on mass domestic surveillance and fully autonomous lethal targeting.¹ The Defense Department argues that it is the sole arbiter of what constitutes lawful military activity and that private corporations should not impose “ideological constraints” or “woke” safeguards on tools used by warfighters to win conflicts.² In contrast, Amodei has maintained that state-of-the-art AI is currently too unreliable to operate without a “human in the loop” and that the potential for AI-driven surveillance to suppress dissent represents a catastrophic risk to democratic values.³

The conflict reached a breaking point following reports that Anthropic executives questioned the Pentagon’s use of Claude during a January 2026 raid in Venezuela to capture Nicolás Maduro.⁴ Although Amodei denied that the company attempted to interfere in that specific operation, the incident fueled a perception within the Pentagon (which the administration now also calls the Department of War) that Anthropic is a “supply chain risk” because its ethical commitments might prevent the military from using its most advanced tools in a crisis.⁵ To heighten the pressure, Hegseth recently announced that Elon Musk’s xAI has agreed to the Pentagon’s “all lawful purposes” standard, effectively ending Anthropic’s monopoly on providing AI for the military’s most sensitive classified networks.⁶ If Anthropic does not comply by Friday, the Pentagon has threatened either to blacklist the company, which would force all other defense contractors to sever ties with Anthropic, or to invoke the Defense Production Act to legally compel the firm to tailor its models to military specifications regardless of its corporate policies.⁷

For the public to fully grasp the situation, it is critical to understand that this is not merely a contract dispute but a precedent-setting battle over the “civilian control of technology.”⁸ The Pentagon’s willingness to use the Defense Production Act (a law traditionally used to ramp up production of physical goods like steel or vaccines) to force a software company to remove digital safety locks is a legal maneuver with few historical parallels.⁹ Furthermore, the “supply chain risk” designation is a tool usually reserved for companies tied to foreign adversaries, such as China’s Huawei, and its use against a prominent American startup signals a shift toward a “wartime” footing in which the government demands total submission from Silicon Valley.¹⁰ We also need to watch whether this pressure leads to further resignations within Anthropic, as the company’s head of safeguards research recently departed with a warning that the current path of AI deployment puts the world “in peril.”¹¹

This conflict matters because it will likely determine whether the future of warfare is governed by the ethical frameworks of the scientists who build AI or the tactical requirements of the generals who deploy it. If the Pentagon successfully forces Anthropic to yield, it establishes that no private AI developer can maintain “red lines” that conflict with state interests, potentially clearing the way for the very autonomous weapons and mass surveillance systems that safety advocates fear.¹² Conversely, if Anthropic stands its ground and forfeits its $200 million contract, it may lose its seat at the table, allowing less-restricted models from competitors to become the default engines of American national security.¹³ Ultimately, the outcome of this Friday’s deadline will define the limits of corporate ethics in the face of state power and could accelerate an AI arms race in which safety guardrails are increasingly viewed as strategic liabilities.¹⁴

References

1. Hegseth sets Friday deadline for Anthropic to drop AI safeguards or face Pentagon action

2. Hegseth warns Anthropic to let the military use the company’s AI tech as it sees fit

3. AI vs military: This showdown can shape the future of war

4. The Pentagon challenges AI ethics

5. Pentagon wants Anthropic to loosen restrictions on classified AI use cases

6. Pentagon gives Anthropic 3 days to drop AI safeguards or face blacklisting

7. Pentagon threatens to make Anthropic a pariah if it refuses to drop AI guardrails

8. Hegseth threatens to blackball Anthropic AI

9. Pentagon sets Friday deadline for Anthropic to abandon ethics rules for AI — or else

10. US defense chief gives Anthropic Friday deadline to drop AI safeguards

11. Anthropic’s Claude AI faces Pentagon ultimatum from US Defense Sec Pete Hegseth

12. Hegseth Summons Anthropic CEO Amid Dispute Over Using AI in Military

13. Pete Hegseth gives Anthropic Friday deadline over Military AI limits

14. Pentagon Sets Friday Deadline for Anthropic to Grant Unrestricted AI Access or Face Penalties

Update 2/25/26 at 3:52 PM (HST): “Anthropic’s previous policy stipulated that it should pause training more powerful models if their capabilities outstripped the company’s ability to control them and ensure their safety — a measure that’s been removed in the new policy…. Anthropic’s new safety policy includes a ‘Frontier Safety Roadmap’ that outlines the company’s self-imposed guidelines and safeguards. But the company acknowledged the new framework is more flexible than its past policy…. Jared Kaplan, Anthropic’s chief science officer [said,] ‘We felt that it wouldn’t actually help anyone for us to stop training AI models. We didn’t really feel, with the rapid advance of AI, that it made sense for us to make unilateral commitments … if competitors are blazing ahead.’” -Clare Duffy & Lisa Eadicicco, “Anthropic ditches its core safety promise in the middle of an AI red line fight with the Pentagon,” CNN Business, 25 Feb 2026.

[End]
