By Jim Shimabukuro (assisted by Claude)

Editor

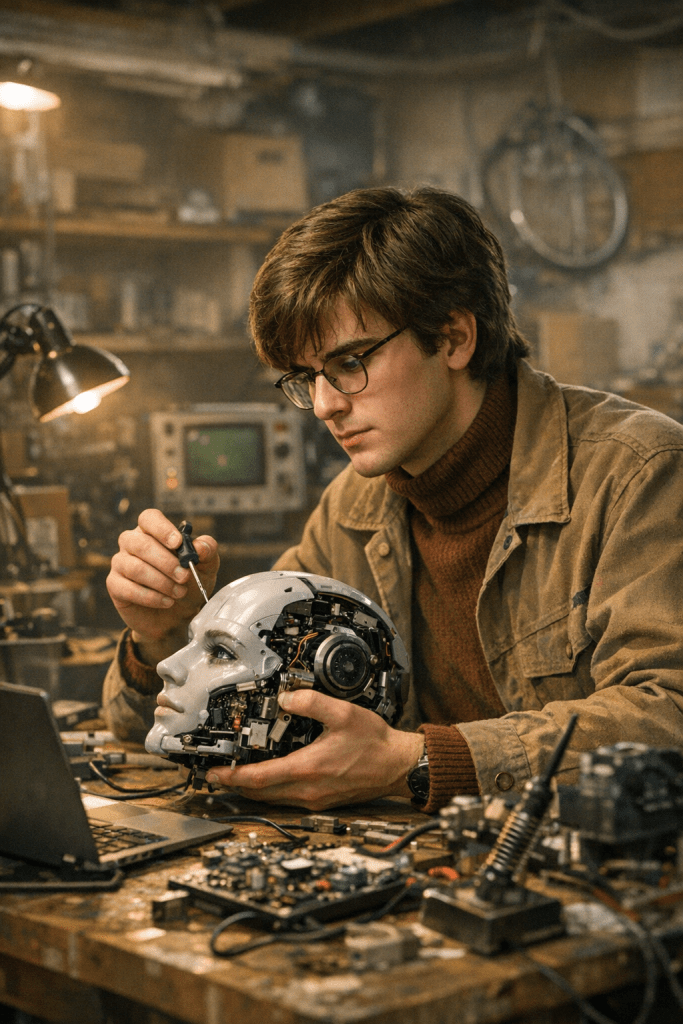

The history of technology is not written primarily by the powerful. It is written by the restless. Steve Jobs and Steve Wozniak assembled the Apple I in a California garage. Bill Gates and Paul Allen wrote their BASIC interpreter for the Altair 8800 on a Harvard mainframe, without ever having touched the machine it was meant to run on. The disruptors of every technological era tend to arrive from the margins, not because the center is incompetent, but because the center is invested in the status quo. Insiders cannot afford to imagine the world differently. The outsiders can.

Artificial intelligence in 2026 presents exactly this dynamic. A small number of extraordinarily well-funded corporations — OpenAI, Google DeepMind, Anthropic, Meta, and a handful of others — control the frontier of model development, consuming billions in compute and attracting the world’s top researchers. And yet, hovering at the edges of this ecosystem, a different kind of builder is at work. These are individuals who are not primarily motivated by quarterly valuations or platform lock-in. They are motivated by ideas: ideas about ownership, access, the nature of intelligence itself, and who gets to benefit from the most consequential technology of our age.

As of 9 March 2026, three noteworthy disruptors are George Hotz, Andrej Karpathy, and Emad Mostaque. Each has a distinct background, a distinct vision, and a distinct theory of disruption. What they share is an orientation toward openness, a distrust of centralization, and a stubborn belief that the future of AI does not have to be decided behind closed doors.

George Hotz — The Anarchist Engineer

George Hotz, known online as geohot, is one of the most consequential and deliberately overlooked figures in the history of computing. At seventeen, he became the first person to carrier-unlock the iPhone, achieving in weeks what teams of engineers at Apple and AT&T had considered essentially impossible. By twenty-one, he had reverse-engineered Sony’s PlayStation 3, gained hypervisor-level access to the hardware, and published the exploit, earning a lawsuit from Sony that ended in a settlement most people in his position would have found ruinous.1

Born in 1989, Hotz grew up as a competition-minded computer prodigy. He was a finalist at the Intel International Science and Engineering Fair in high school2 and later briefly attended Carnegie Mellon University before dropping out in a characteristically impatient exit. The theme of his career has never been institutional affiliation. It has been proof of concept. Hotz operates from the conviction that large organizations, whether corporations or governments, are constitutionally unable to build certain kinds of elegant, fast, or honest technology. And he has spent the better part of a decade proving it.

Hotz represents a particular archetype — the hacker-philosopher — that the mainstream AI industry has largely failed to produce. He is not primarily a researcher, an executive, or a fundraiser. He is a builder who thinks about building, and who publishes that thinking in real time via livestreamed programming sessions watched by tens of thousands of followers.3

In 2015, Hotz founded comma.ai with a simple and audacious premise: he would build a better autonomous driving system than Tesla’s Autopilot, funded by approximately three million dollars in seed money from Andreessen Horowitz. Tesla had hundreds of millions of dollars and thousands of engineers. Hotz had a small team and a retrofitted Honda Civic.4

The product that emerged — openpilot — has, as of 2025, accumulated over 100 million miles of real-world autonomous driving data and supports more than 325 vehicle models.5 It works by using a camera-equipped device, the Comma 3X, installed beneath the rearview mirror of compatible vehicles. The system runs a neural network trained on community-uploaded driving data, making decisions about steering, acceleration, and braking in a genuinely end-to-end fashion — without the modular pipeline of separate perception, prediction, and planning subsystems used by competitors like Waymo.6

The implications of this architecture are profound. In April 2024, openpilot set a new record for the semi-autonomous Cannonball Run — a coast-to-coast drive across the United States — using a 2017 Toyota Prius, traveling 43 hours and 18 minutes at 98.4% autonomy.7 This was not a demonstration by a company with a valuation in the tens of billions. It was a community-driven feat enabled by open-source software running on consumer hardware that costs a few hundred dollars.

Hotz stepped down from day-to-day leadership of comma.ai in October 2022 and departed the company’s board entirely in November 2025, citing a familiar restlessness: the company had matured beyond the kind of problem he found interesting.2 His next venture, tiny corp, has the stated goal of building an alternative to NVIDIA’s dominance in AI compute hardware. The centerpiece of tiny corp’s software stack is tinygrad, a minimalist deep learning framework that Hotz started as, in his own framing, a slightly irreverent proof that the entire ecosystem of machine learning libraries (TensorFlow, PyTorch, and the rest) was more complicated than it needed to be.

Tinygrad began as a project of fewer than a thousand lines of Python. It has since grown into a legitimate, performant engine capable of running real-world models including inference for large language models.8 The hardware counterpart, the Tinybox — a $15,000 desktop AI computer aimed at local model training and inference — represents something even more ideologically charged: a personal compute cluster for the individual, at a time when the industry is racing toward centralized cloud infrastructure and proprietary data centers.2
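To give a sense of how little code the core of a deep learning framework actually requires, here is a minimal sketch of reverse-mode automatic differentiation on scalars in plain Python. It is not tinygrad's code or API: the `Value` class and the `neuron_gradients` helper are hypothetical names invented for this illustration, and a real framework operates on tensors and compiles kernels for accelerators.

```python
import math


class Value:
    """A scalar that records how it was computed so gradients can flow back."""

    def __init__(self, data, parents=(), grad_fns=()):
        self.data = float(data)
        self.grad = 0.0
        self._parents = parents      # Values this one was computed from
        self._grad_fns = grad_fns    # local derivative rule for each parent

    def __add__(self, other):
        other = other if isinstance(other, Value) else Value(other)
        return Value(self.data + other.data, (self, other),
                     (lambda g: g, lambda g: g))

    def __mul__(self, other):
        other = other if isinstance(other, Value) else Value(other)
        return Value(self.data * other.data, (self, other),
                     (lambda g: g * other.data, lambda g: g * self.data))

    def tanh(self):
        t = math.tanh(self.data)
        return Value(t, (self,), (lambda g: g * (1.0 - t * t),))

    def backward(self):
        # Order the graph topologically, then apply the chain rule in reverse.
        order, seen = [], set()

        def visit(node):
            if id(node) not in seen:
                seen.add(id(node))
                for parent in node._parents:
                    visit(parent)
                order.append(node)

        visit(self)
        self.grad = 1.0
        for node in reversed(order):
            for parent, grad_fn in zip(node._parents, node._grad_fns):
                parent.grad += grad_fn(node.grad)


def neuron_gradients(x, w, b):
    """Hypothetical helper: one tanh neuron, returning output and gradients."""
    xv, wv, bv = Value(x), Value(w), Value(b)
    out = (xv * wv + bv).tanh()
    out.backward()
    return out.data, xv.grad, wv.grad, bv.grad
```

Calling `neuron_gradients(2.0, 3.0, -6.0)` evaluates a single neuron and its gradients in one pass. The whole engine is a few dozen lines, which is the kind of simplicity the paragraph above describes, though matching a production framework's performance is a much larger problem.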

Hotz’s disruption operates on two levels. The first is technical. His work on tinygrad challenges the assumption that machine learning frameworks must be complex, opaque, and optimized for the specific hardware of a single vendor. If tinygrad or a successor proves capable of matching PyTorch’s performance while maintaining its radical simplicity, it could dramatically lower the barrier to entry for hardware-level AI development — potentially breaking NVIDIA’s stranglehold on the AI compute stack. Hotz has said publicly that he believes there is a tenfold performance improvement available to those willing to approach the problem from first principles rather than from the accumulated technical debt of existing frameworks.9

The second level of disruption is ideological. Hotz has articulated, as clearly as anyone in the field, the argument that the centralization of AI in a few corporate data centers represents a fundamental mistake, comparable to designing the early internet to run only on IBM mainframes.8 His work on the Tinybox and tinygrad is a hardware-level argument that intelligence should be distributed, personal, and open. This philosophy aligns with a growing movement in the AI landscape, where open-source models are beginning to close the capability gap with proprietary ones,10 and where questions of compute sovereignty are increasingly treated as national security matters.

The analogy to the early personal computing revolution is not accidental. Hotz himself has drawn it explicitly. Wozniak built the Apple I not because he thought it would be a product, but because he wanted a computer of his own. Hotz is building the Tinybox not primarily to compete with NVIDIA’s H100 — which sells for far more and delivers far greater raw performance — but to prove that the shape of the compute ecosystem does not have to be a few enormous machines owned by a few enormous companies. Whether the market ultimately agrees with him is a separate question. What is beyond doubt is that he is asking the right question at the right time, and that the question is already reshaping how a generation of builders thinks about the infrastructure of intelligence.

Andrej Karpathy — The Great Demystifier

If George Hotz represents the hacker tradition — disruption through code and confrontation — Andrej Karpathy represents something equally powerful and considerably rarer: disruption through understanding. Karpathy is the most effective communicator of how modern artificial intelligence actually works, and the full implications of that role are only beginning to be felt.

Born in Bratislava, Czechoslovakia in 1986, Karpathy moved with his family to Toronto at fifteen.11 He completed bachelor’s degrees in computer science and physics at the University of Toronto, a master’s at the University of British Columbia, and a PhD at Stanford under the supervision of Fei-Fei Li — one of the most influential AI researchers of the deep learning era. At Stanford, Karpathy designed and led CS231n: Convolutional Neural Networks for Visual Recognition, which grew from 150 enrolled students in its first year to more than 750 in its third,12 becoming one of the most widely watched online courses in the history of the field.

Karpathy joined OpenAI as a founding member when it launched in late 2015, then departed in 2017 to become Director of AI at Tesla, where he led the computer vision team responsible for Tesla Autopilot. The team handled data labeling, neural network training, and deployment on Tesla’s custom inference chip, all in-house.13 He left Tesla in 2022, rejoined OpenAI in 2023, and exited again in early 2024 to pursue what he called, with characteristic understatement, his “personal projects.”

What those projects would look like was no mystery to anyone who had followed Karpathy’s career. He had been teaching and building educational tools for AI throughout his entire professional life, always as a side project. The question was only whether he would finally do it full time.

In July 2024, Karpathy announced the founding of Eureka Labs with the declared ambition of building “a new kind of school that is AI native.”14 The premise is elegant and the problem it addresses is one of the most genuine constraints on human progress: the scarcity of great teachers. Karpathy has described the ideal learning experience as having access to a subject-matter expert who is “deeply passionate, great at teaching, infinitely patient and fluent in all of the world’s languages” — but noted that such experts “are very scarce and cannot personally tutor all 8 billion of us on demand.”15

Eureka Labs is Karpathy’s attempt to solve this problem structurally. The platform envisions a model in which expert teachers design high-quality course materials, which are then scaled and guided by an AI teaching assistant: a kind of personalized tutor that can answer questions, adapt to the student’s pace, and maintain the pedagogical intent of the original curriculum. The first product, LLM101n, is an undergraduate-level course teaching students how to build their own large language model from scratch.16 It is, in miniature, a demonstration of the Eureka Labs concept: what students learn to build is a smaller version of the AI teaching assistant that would eventually run the platform.

Alongside Eureka Labs, Karpathy has continued his direct educational work. His YouTube series — particularly the “Neural Networks: Zero to Hero” playlist and his 2025 video “Deep Dive into LLMs like ChatGPT” — has introduced the architecture of modern AI to an audience that most AI companies have made no effort to reach. These are not simplified pop-science explanations. They are rigorous, code-level walkthroughs that treat the viewer as an intelligent adult capable of understanding the actual mathematics.

In December 2025, Karpathy published his “2025 LLM Year in Review,” which was widely circulated in the AI research community and treated as something close to an authoritative diagnosis of where the field stood. He identified the emergence of Reinforcement Learning from Verifiable Rewards (RLVR) as the most consequential development of the year: a training methodology in which models are optimized against objective, automatically verifiable reward functions rather than human preference signals, and in the process learn to generate “reasoning traces” that resemble human thinking.17 Earlier in 2025 he had also coined the term “vibe coding” to describe the emerging practice of building software through natural language interaction with AI,11 a term that has since entered common usage across the industry.
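What makes a reward “verifiable” is easiest to see in code. The sketch below is a hypothetical illustration, not taken from Karpathy's review or from any lab's training stack: it assumes the model is asked to end its output with a line of the form `Answer: <number>`, and it scores each completion by checking that number against a known result. The function names and the `Answer:` convention are assumptions made for this example.

```python
import re


def extract_final_answer(completion):
    """Return the text after the last 'Answer:' marker, or None if absent."""
    matches = re.findall(r"Answer:\s*(.+)", completion)
    return matches[-1].strip() if matches else None


def verifiable_reward(completion, expected):
    """1.0 if the final answer matches the expected number, otherwise 0.0."""
    answer = extract_final_answer(completion)
    if answer is None:
        return 0.0
    try:
        return 1.0 if abs(float(answer) - float(expected)) < 1e-9 else 0.0
    except ValueError:
        # A non-numeric answer cannot be verified, so it earns nothing.
        return 0.0


def score_batch(completions, expected):
    """Score several sampled completions for the same problem."""
    return [verifiable_reward(c, expected) for c in completions]
```

No human judgment enters the score: a completion is rewarded only when its final answer checks out, regardless of how persuasive the intermediate text sounds. In an actual RLVR setup these scores would feed a policy-gradient update, which is beyond the scope of this sketch.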

Karpathy’s public diagnosis of the field has carried an important corrective: he has argued that despite rapid benchmark gains, the field has so far exploited less than 10% of the potential of the current computing paradigm,18 and that the competition in 2026 will shift from raw compute to deeper exploration of how AI can reason efficiently. These are not the words of someone building inside the industry’s established incentive structures. They are the words of someone thinking about the field from the outside and with a longer time horizon.

The disruption Karpathy represents is not immediately legible in the conventional language of market disruption. He is not directly competing with OpenAI or Google DeepMind for model capabilities. He is not raising hundreds of millions of dollars to build alternative foundation models. What he is doing is arguably more powerful: he is changing who can participate in the conversation.

The history of transformative technology is in part a history of legibility. When programming languages became high-level, the population of software developers expanded by an order of magnitude. When the graphical user interface arrived, the population of computer users expanded by several orders of magnitude. Karpathy’s project is to perform the same transformation for AI development: to make the underlying mechanics of modern machine learning accessible to anyone with curiosity and a few weeks to spare.

If Eureka Labs succeeds in building a truly scalable AI-native educational platform, the implications extend well beyond AI education. The platform could, in principle, be applied to physics, medicine, law, mathematics — any domain in which the shortage of expert teachers creates a structural bottleneck on human development. This is not a niche market. It is arguably the largest market that has ever existed: the education of the entire human population.15

Karpathy’s influence on the next generation of AI researchers is already measurable. His courses have shaped the intellectual formation of thousands of engineers now working at leading AI labs. His public writing and video work continue to set the interpretive frame through which many practitioners understand the field’s direction. If the future of AI is indeed shaped by the people who build it, and if those people were educated by Karpathy, then his influence on the field may ultimately exceed that of anyone operating within the industry’s institutional structures.

Emad Mostaque — The Decentralist

Emad Mostaque is the most controversial figure in this collection, and perhaps the most instructive. His career is a case study in what happens when a visionary with an unusually accurate sense of where technology is going collides with the institutional structures of a corporate startup — and the collision is not pretty. But the story does not end there. After his departure from Stability AI in March 2024, Mostaque has re-emerged as the architect of something considerably more radical than anything he attempted inside a corporate framework.

Born in Jordan in 1983 to a Bengali Muslim family, Mostaque was raised in Dhaka and moved to the United Kingdom at age seven.19 He earned a master’s degree in mathematics and computer science from the University of Oxford and spent over a decade as a hedge fund manager, developing a facility for quantitative analysis and a useful detachment from the techno-optimism that characterizes most AI founders. His entry into AI was personal rather than professional: after his son was diagnosed with autism, Mostaque began exploring how artificial intelligence could help analyze existing research and identify patterns in complex biological data.20

In late 2020, Mostaque co-founded Stability AI with the explicit mission of making foundational AI technology accessible to all. The company’s most consequential act was the open-source release of Stable Diffusion in August 2022, a text-to-image model that had originated as an academic research project at Ludwig Maximilian University in Munich. Stability AI provided the computational resources to scale the research; when the model was released freely to the public, it was downloaded millions of times and spawned an ecosystem of creative tools that fundamentally changed the economics of visual AI.21 Unlike competitors DALL-E and Midjourney, which operated behind paywalls, Stable Diffusion was immediately available to artists, researchers, and hobbyists worldwide.

Mostaque’s departure from Stability AI in March 2024 was messy. Investors had grown frustrated with his management style, key technical staff had resigned, and a co-founder had filed suit.22 The institutional failure was real. But the institutional failure is also, in a specific sense, beside the point. The open-source release of Stable Diffusion — whatever Mostaque’s management failures — was a genuine act of technological redistribution. It changed who could create visual AI, and it demonstrated that an organization outside the major tech companies could release a frontier model to the world without restriction.

Since leaving Stability AI, Mostaque has founded Intelligent Internet (ii.inc) and published, in July 2025, a Master Plan and accompanying whitepaper outlining his vision for a decentralized AI infrastructure.23 The scope of this vision is extraordinary: Mostaque is not proposing a new app, a new model, or a new company in the conventional sense. He is proposing a new economic and governance layer for artificial intelligence — a protocol, in the language of blockchain technology, that would make AI a public utility rather than a private service.

The architecture of Intelligent Internet rests on several interlocking components. The core is the II-Agent: an open-source, sovereign AI assistant that, in Mostaque’s vision, every person on Earth would own rather than rent. The agent’s architecture uses a function-calling paradigm for reasoning, tool selection, and execution, and features advanced context management for up to 120,000 tokens. On benchmark testing as of 2025, II-Agent achieved approximately 71% accuracy on the GAIA (General AI Assistants) benchmark, outperforming several contemporary commercial agents.24
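For readers unfamiliar with the function-calling pattern, the following is a minimal sketch of the general idea: a loop that asks a model what to do, runs the tool it requests, and feeds the result back, while trimming history to a token budget. It is not II-Agent's code. The tool registry, the JSON message shape, the four-characters-per-token estimate, and the `scripted_model` stand-in are all assumptions made for illustration; only the 120,000-token figure comes from the text.

```python
import json

CONTEXT_BUDGET_TOKENS = 120_000  # figure from the text; counting below is a crude stand-in


def estimate_tokens(message):
    # Assumption: roughly 4 characters per token; real tokenizers differ.
    return max(1, len(message["content"]) // 4)


def trim_context(messages, budget=CONTEXT_BUDGET_TOKENS):
    """Drop the oldest non-system messages until the estimate fits the budget."""
    kept = list(messages)
    while sum(estimate_tokens(m) for m in kept) > budget and len(kept) > 2:
        del kept[1]  # index 0 is the system prompt, which is always kept
    return kept


# Hypothetical tool registry: names the model may call, mapped to functions.
TOOLS = {
    "add": lambda a, b: a + b,
    "word_count": lambda text: len(text.split()),
}


def run_agent(model, task, max_steps=5):
    """Loop: ask the model, execute any tool it requests, feed the result back."""
    messages = [
        {"role": "system", "content": "Reply with JSON: a tool call or a final answer."},
        {"role": "user", "content": task},
    ]
    for _ in range(max_steps):
        reply = json.loads(model(trim_context(messages)))
        if "final" in reply:
            return reply["final"]
        result = TOOLS[reply["tool"]](**reply["args"])
        messages.append({"role": "assistant", "content": json.dumps(reply)})
        messages.append({"role": "tool", "content": json.dumps(result)})
    return None  # step limit reached without a final answer


def scripted_model(messages):
    """Stand-in for an LLM: calls 'add' once, then reports the tool result."""
    if messages[-1]["role"] == "tool":
        return json.dumps({"final": messages[-1]["content"]})
    return json.dumps({"tool": "add", "args": {"a": 2, "b": 3}})
```

Running `run_agent(scripted_model, "What is 2 + 3?")` exercises one tool call followed by a final answer. A production agent would replace `scripted_model` with a real model client and would use smarter context management (summarization, for example) than simply dropping the oldest messages.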

In February 2026, Intelligent Internet released II-Agent V1, described as the first production-ready version of its open-source intelligent assistant, with benchmark scores of 61.8% on Terminal Bench and 45.1% on SWE Bench Pro.23 The release also included II-Agent Chat, a multi-model interface allowing users to work across major foundation models (including Gemini 3, Claude Sonnet, and GPT-5) in a single thread, using their own API keys.

Mostaque’s economic philosophy, developed in his book The Last Economy (released August 2025), argues that the imminent abundance of machine intelligence will fundamentally reshape the economy and collapse the value of human cognitive labor.25 This is not a new argument; versions of it have circulated in AI discourse for years. What is distinctive about Mostaque’s framing is his insistence on the infrastructure question: who will own the AI when cognitive labor becomes economically abundant? His answer is explicit — either individuals own their AI, or a handful of corporations will own everyone’s AI, and the difference between those two outcomes is civilizational.

The Intelligent Internet protocol is designed around what Mostaque calls a “Proof of Benefit” system — an economic layer that compensates contributors to the shared AI infrastructure rather than extracting value from users.26 This is paired with “Common Ground,” a coordination layer for AI agent interaction, and a data foundation built on open, auditable knowledge rather than scraped internet data. As of early 2026, Mostaque has argued publicly that AI agents will go mainstream in 2026 and that the future of AI lies beyond the transformer architecture entirely — a significant technical claim that, if accurate, would represent a fundamental shift in the infrastructure of the field.27

Mostaque’s disruption, if it arrives, would be structural rather than technological. He is not proposing to build a better large language model. He is proposing to change the ownership structure of AI — to move intelligence from being a service that corporations provide to users, to being infrastructure that individuals and communities own.

The open-source AI ecosystem, as it exists in 2026, already demonstrates that the capability gap between open and closed models is narrowing rapidly.28 Mostaque’s insight is that capability parity is not, by itself, sufficient for meaningful democratization. A world in which open-weight models are freely available but run exclusively on proprietary cloud infrastructure still concentrates power in the hands of the infrastructure owners. The deeper disruption requires a new economic layer — something like what the internet’s TCP/IP protocols provided for communication: a shared, neutral, open infrastructure on which anyone can build.

This is an enormous ambition, and Mostaque’s track record as a manager suggests genuine reasons for caution. But the history of disruptive technology is full of messengers whose personal failures were eventually overshadowed by the validity of their message. The open-source release of Stable Diffusion, which Mostaque championed, has already proved that the argument for open AI is not merely philosophical — it is technically and commercially viable. The question his current work poses is whether that argument can be extended from individual models to the entire infrastructure layer of artificial intelligence.

If the answer is yes — if the Intelligent Internet protocol, or something inspired by it, establishes a genuinely decentralized substrate for AI — the implications would exceed anything achieved by the commercial AI companies. It would represent not a disruption of the AI industry but a dissolution of the conditions under which the AI industry, as currently constituted, can exist. That is the scale at which Mostaque is thinking. Whether he is the person who executes it, or simply the person who articulated it clearly enough that someone else eventually does, his contribution to the question may prove to be the most consequential of any figure in this document.

References

2. George Hotz — Wikipedia (updated February 2026)

3. George Hotz Twitch and YouTube — public programming livestreams

4. George Hotz of Comma.ai Wants to Open Source Your Car — Fortune (archived)

5. openpilot — Wikipedia (updated December 2025)

6. Why Ex-Hacker George Hotz is Giving Away Self-Driving Software — IEEE Spectrum

7. openpilot Wikipedia: Cannonball Run record entry

8. George Hotz, Comma AI, and the Rise of Tinybox — Semperfly Substack (May 2025)

9. Comma AI Founder George Hotz Is Stepping Down — AutoEvolution (November 2022)

11. Andrej Karpathy — Wikipedia (updated 2026)

12. Andrej Karpathy Personal Website — karpathy.ai

13. Andrej Karpathy — Official Biography (karpathy.ai)

15. OpenAI Co-Founder Andrej Karpathy Announces Eureka Labs, an AI Education Startup — Inc. (July 2024)

17. 2025 LLM Year in Review from Andrej Karpathy — MLOps Substack (January 2026)

18. AI Guru Andrej Karpathy Unveils 2025 Annual Summary — 36kr English (December 2025)

19. Emad Mostaque — Wikipedia (updated March 2026)

21. Emad Mostaque: The ‘Secret Agent’ Who Lost His AI Empire — AI Discoveries (2025)

22. Emad Mostaque — IQ.wiki (updated 2025)

23. Intelligent Internet Master Plan (open source, not so secret) — ii.inc blog (updated February 2026)

24. Intelligent Internet — IQ.wiki (2025)

25. Intelligent Internet — IQ.wiki: The Last Economy entry (August 2025)

26. Intelligent Internet Whitepaper — ii.inc

[End]
