By Jim Shimabukuro (assisted by Perplexity)

Editor

Jensen Huang’s GTC 2026 keynote framed “physical AI” and robotics not as a side bet but as the next multi‑trillion‑dollar wave of the AI economy, continuous with today’s datacenters rather than a separate field.1,4 In both NVIDIA’s own recap and detailed press coverage, he cast robots, autonomous vehicles, and industrial automation as the natural endpoint of an “AI factory” stack where gigawatt‑scale infrastructure produces models that flow into embodied systems, arguing that the next gold rush after digital agents will be robots and other “physical AI” burning even more data and compute.3,4,6 This is less about a new technical thesis than a macro‑industrial one: embodied AI is presented as an infrastructure market similar in scale and inevitability to cloud and GPUs, with NVIDIA positioning itself as the full‑stack vendor from energy to humanoid controllers. In that sense, Huang’s message differs from classic robotics talks by making physical AI primarily an inference and datacenter story, with robots as endpoints of a vertically integrated pipeline rather than standalone machines.3,4

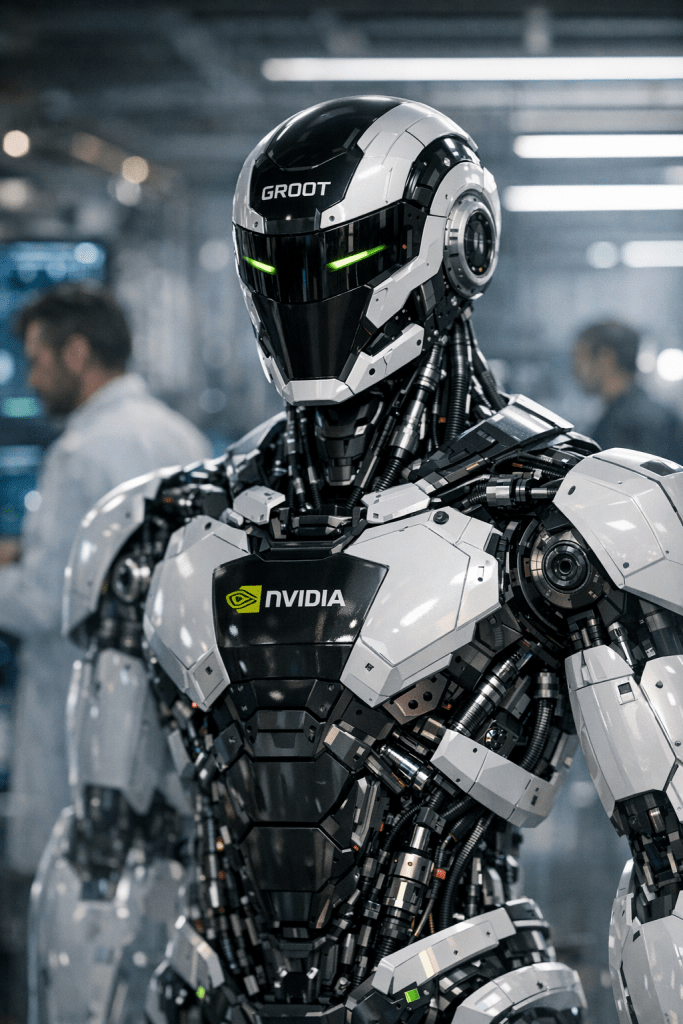

Within that narrative, he highlighted NVIDIA Isaac and the GR00T humanoid foundation model as core “operating systems” for embodied intelligence, folding them into the same foundation‑model logic that underpins language models.3,6 The Olaf robot demo (an animated character with a Jetson in its “tummy,” learning to walk entirely inside Omniverse) served to dramatize his claim that simulation is now the training ground for physical AI, not just a visualization tool.6 Tom’s Guide and Quartz both emphasize that in Huang’s telling, what matters is that everything on stage, from humanoids to animated figures, was learned and orchestrated in simulation, reinforcing his long‑standing belief that synthetic worlds plus massive compute can bootstrap useful real‑world skills.1,4,6 That emphasis implicitly downplays hardware, contact mechanics, and on‑robot experimentation in favor of treating robots as inference clients for cloud‑trained world models, a view that meshes with NVIDIA’s broader focus on Omniverse and CUDA‑like software moats.3,4,6

Compared with Fei‑Fei Li, Huang’s view overlaps on the importance of world models and spatial understanding but differs in framing and emphasis. Li’s recent work and public writing argue that the “next frontier” is spatial intelligence—world models that understand objects, causality, and physical constraints so AI can coordinate robots, logistics, and complex environments—explicitly contrasting this with purely text‑trained systems.11 She stresses that humans are “embodied agents” who learn through movement and consequence, and that current language‑centric AI lacks grounding, so the key step is building models that simulate physics and uncertainty before acting.11,17 In that sense, Li treats embodied AI primarily as an epistemic challenge—how to make AI understand the world—whereas Huang packages similar ideas into an industrial narrative about AI factories and physical AI markets.3,4,11 Both see simulation as a strategic advantage, but Li’s writing leans toward careful, human‑centric deployment and governance, while Huang talks in the language of multi‑trillion‑dollar opportunities and vertical integration.3,4,11,14

Rodney Brooks offers a near‑mirror‑image perspective to Huang’s optimism, particularly around humanoids. In late‑2025 essays and interviews he argues that deployable dexterity will remain “pathetic” compared with human hands through at least the mid‑2030s, that today’s walking humanoids will be too unsafe for close human interaction without new mechanical systems, and that billions now pouring into humanoid startups will mostly be lost. He is especially skeptical that vision‑heavy neural‑network approaches can solve manipulation without a deeper representation of touch and contact, predicting that claims about humanoids in 10% of households by 2030 are simply unrealistic.12,15,1,8 Where Huang’s GTC message treats humanoid foundation models and simulation‑trained robots as commercially near‑term pillars of a new infrastructure layer, Brooks frames the same wave of investment as a hype cycle that underestimates hardware, safety, and scaling constraints. Thus, while both care about robots in real environments, Brooks insists on incremental, domain‑specific machines (wheeled platforms with arms, tightly scoped tasks), whereas Huang bundles humanoids and other robots together under a general “physical AI” banner that he implies will scale much faster.3,12,15

Gill Pratt and Toyota Research Institute occupy a middle ground that again highlights how distinctive Huang’s emphasis is. Pratt’s public statements and 2025 discussions focus on “amplifying people rather than replacing them,” using generative‑AI‑based “Large Behavior Models” to teach robots useful, dexterous skills in ways that integrate into human workflows. TRI describes its robotics program as rooted in jidoka—automation with a human touch—and frames humanoid or humanoid‑like machines as assistive tools that help people remain productive and independent, especially in aging societies. Compared with Huang, Pratt downplays datacenter narratives and instead talks about co‑working, safety, and incremental capability gains; compared with Brooks, he is more optimistic about learning‑based dexterity but more conservative than Huang on timelines and roles.13,16,1,9 Huang’s keynote, by contrast, ties embodied AI tightly to NVIDIA’s data‑center businesses, suggesting an almost continuous escalation from chatbots to office agents to warehouse robots, and uses partners like Lilly’s AI factory deployment to suggest that “physical AI is no longer theoretical.”3,4,1,9 That kind of macro framing—robots as another consumption surface for inference tokens—goes beyond the more human‑scale, task‑specific stories told by Pratt or Li.11,13,14,16

What is arguably new in Huang’s 2026 embodied‑AI rhetoric is not a technical insight about embodiment itself but the way he normalizes robots as just another client of a global AI infrastructure and asserts that the “ChatGPT moment for robots” is near once you combine world models, simulation, and compute at scale.7,10,3,4 He has said explicitly in related talks that “without real‑world data, embodied intelligence can only be an illusion,” but pairs that caution with a plan to generate and structure that data via Omniverse, GR00T, and a broad ecosystem of partners from warehouse robots to general‑purpose humanoids.7,10,3,6 This differs from Brooks’s skepticism about learning dexterity from vision alone, from Li’s more research‑oriented push to ground models responsibly in spatial understanding, and from Pratt’s emphasis on human‑centric deployment.11,12,13,15,16,1,9 It matters because NVIDIA is effectively trying to do for embodied AI what it did for GPU‑accelerated deep learning: define the default stack, convince investors and industry that the market is infrastructure‑scale and imminent, and thereby steer capital, research, and policy choices toward large‑scale simulated training and cloud‑connected robots.3,4,6,10 If that narrative holds, we should expect aggressive investment into platforms that treat robots as inference endpoints for datacenter‑trained world models, possibly at the expense of more heterogeneous, smaller‑scale, or non‑cloud‑centric approaches that voices like Brooks and, in a different way, Li and Pratt continue to highlight.11,12,13,15,16

References

- “Nvidia GTC 2026 LIVE — Jensen Huang reveals DLSS 5, OpenClaw partnership, and an Olaf robot,” Tom’s Guide, 2026. https://www.tomsguide.com/computing/live/nvidia-gtc-2026-live

- “Nvidia GTC 2026 Live Blog: Jensen Huang’s Keynote, Hardware Reveals, and More AI News,” TechRepublic, 2026. https://www.techrepublic.com/article/news-nvidia-gtc-2026-live-updates/

- “Jensen Huang GTC 2026 Keynote: Everything NVIDIA Announced,” Oplexa, 2026. https://oplexa.com/jensen-huang-gtc-2026-keynote-nvidia-announcements/

- “Jensen Huang says the next AI boom belongs to inference,” Quartz, 2026. https://qz.com/nvidia-gtc-2026-jensen-huang-keynote-takeaways

- “NVIDIA GTC 2026: Live Updates on What’s Next in AI,” NVIDIA Blog, 2026. https://blogs.nvidia.com/blog/gtc-2026-news/

- “NVIDIA GTC Keynote 2026,” YouTube (keynote video), 2026. https://www.youtube.com/watch?v=jw_o0xr8MWU

- “CES 2026 NVIDIA CEO Jensen Huang’s Keynote Highlights,” moomoo community, 2026. https://www.moomoo.com/community/feed/key-content-of-the-ces-2026-speech-by-nvidia-ceo-115846361120773

- “Jensen Huang’s Single Statement Triggers Another Frenzy,” 36Kr Global, 2026. https://eu.36kr.com/en/p/3632969424356355

- “Fei-Fei Li Ushers In Next Frontier Of Artificial Intelligence,” Forbes, 2025. https://www.forbes.com/sites/geekgirlrising/2025/11/20/fei-fei-li-ushers-in-ais-next-frontier-spatial-intelligence/

- “From Words to Worlds: Spatial Intelligence is AI’s Next Frontier,” Fei-Fei Li, Substack, 2025. https://drfeifei.substack.com/p/from-words-to-worlds-spatial-intelligence

- “Fei-Fei Li sparked an AI boom — now she won’t let humans fall behind,” USA Today, 2026. https://www.usatoday.com/story/tech/2026/03/02/fei-fei-li-women-of-the-year-2026/88655019007/

- “Rodney Brooks, the Godfather of Modern Robotics, Says the Field Is Still Not Ready for Humanoid Helpers,” New York Times, 2025. https://www.nytimes.com/2025/12/14/business/rodney-brooks-robots-roomba.html

- “Why Today’s Humanoids Won’t Learn Dexterity,” Rodney Brooks blog, 2025. http://www.rodneybrooks.com/why-todays-humanoids-wont-learn-dexterity/

- “Blog – Rodney Brooks” (2025 predictions on humanoid robots), Rodney Brooks blog, 2025. https://rodneybrooks.com/blog/

- “The Last Word: Toyota Research Institute’s Gill Pratt on Using Generative AI to Teach Robots New Dexte…,” Dallas Innovates, 2023 (context for TRI’s approach, still referenced 2025–26). https://dallasinnovates.com/the-last-word-toyota-research-institutes-gill-pratt-on-using-generative-ai-to-teach-robots-new-dexterous-skills/

- “Toyota joins race for humanoid robot workers, pledges to amplify not replace people,” Toyota Research Institute / Automotive News summary, 2025. http://www.tri.global/news/toyota-joins-race-humanoid-robot-workers-pledges-amplify-not-replace-people

- “Developing Intelligent Machines for a Human-Centric Future,” Ride AI 2025 talk with Gill Pratt, 2025. https://www.youtube.com/watch?v=xFGdVSVJ82s

[End]
