By Jim Shimabukuro (assisted by Perplexity)

Editor

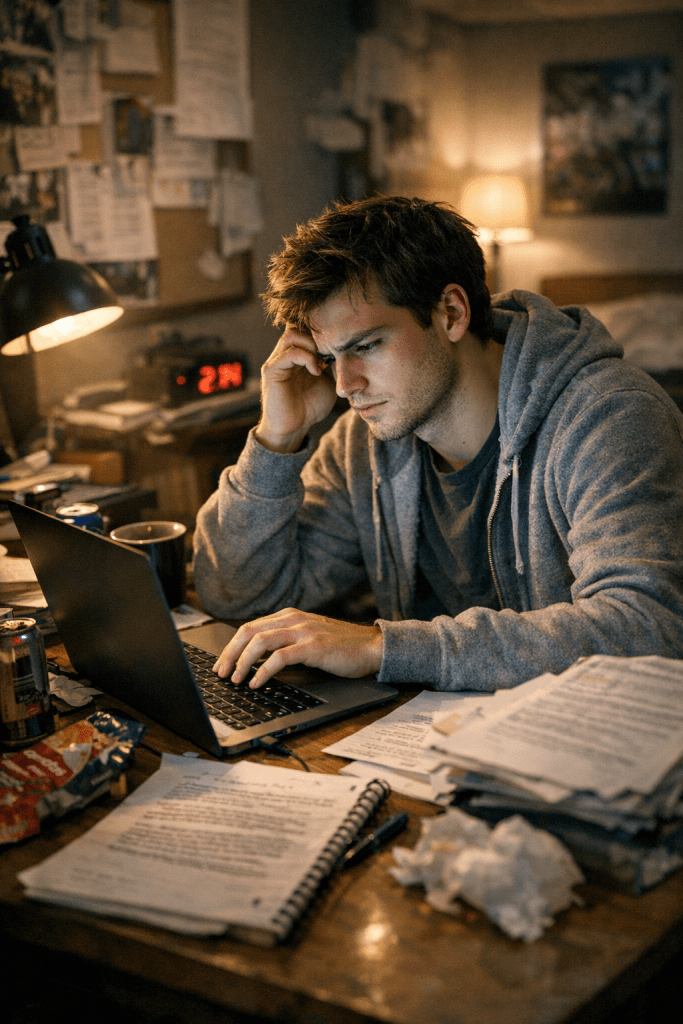

Introduction: In the last couple of months, I’ve noticed what appears to be a startling improvement in the quality of prose generated by chatbots in their free tiers. To determine if I’m hallucinating, I asked Perplexity to look into what appears to be an exponential refinement in style. -js

AI-generated prose in free-tier chatbots has become markedly more fluent, versatile, and “human-sounding” since late 2022, but the evidence points to rapid, stepwise improvement rather than clean exponential growth, with important ceilings and distortions that become visible as soon as you look past surface polish.1,4,6,7,17,20 Your sense that something has changed in just the last few months is consistent with the pattern researchers are now documenting: frequent model upgrades, better alignment and instruction-tuning, and widespread human-in-the-loop workflows have collectively raised average output quality and blurred the line between AI-assisted and purely human prose in everyday settings, even though true originality, voice, and long-form coherence remain recognizably human strengths.4,5,13,14,17,20

At the level of “what” has changed, several lines of empirical work converge on the same picture: AI prose is now routinely polished and structurally competent, but stylistically narrow. A University of Warwick study of nearly 5,000 student lab reports over a decade found that, after the release of ChatGPT in 2022, student writing became significantly more formal, sophisticated, and positive in tone, even though grades did not improve, suggesting that the language surface got smoother without a corresponding gain in underlying writing skill.15,18

An MIT-linked study on essay writing with and without ChatGPT found that AI-assisted essays tended to be more uniform in topic, vocabulary, and sentence structure than fully human essays, and that “AI-judges” rated these AI-aided texts as high quality in conventional rubric terms, again emphasizing polish and consistency over novelty or range.12 In a stylometric analysis comparing hundreds of human short stories with pieces generated by models like GPT‑3.5, GPT‑4, and Llama 70B, James O’Sullivan’s group showed that AI texts cluster into tight stylistic groups while human prose shows much greater dispersion, indicating that current systems produce smooth but statistically homogeneous writing that lacks the individual “lexical fingerprints” characteristic of human authors.14,17
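To make the idea of "tight clusters" versus "dispersion" concrete, here is a minimal sketch of one way a stylometric spread could be measured. It is not the method used in the study cited above: the ten-word `FUNCTION_WORDS` list and the `profile` and `dispersion` helpers are hypothetical names introduced only for illustration, and real stylometric work relies on far larger feature sets and corpora.

```python
import math
from collections import Counter

# Hypothetical, deliberately tiny feature set (an assumption for this sketch);
# published stylometric analyses use hundreds of features.
FUNCTION_WORDS = ["the", "and", "of", "to", "a", "in", "that", "it", "but", "i"]


def profile(text):
    """Return the relative frequency of each function word in `text`."""
    # Crude tokenization: split on whitespace, trim common punctuation, lowercase.
    tokens = [w.strip(".,;:!?\"'()").lower() for w in text.split()]
    tokens = [t for t in tokens if t]
    counts = Counter(tokens)
    total = len(tokens) or 1  # avoid division by zero on empty input
    return [counts[w] / total for w in FUNCTION_WORDS]


def dispersion(texts):
    """Mean Euclidean distance of each text's profile from the group centroid.

    A lower value means the texts are stylistically closer to one another
    on this (very limited) set of features.
    """
    profiles = [profile(t) for t in texts]
    n = len(profiles)
    centroid = [sum(column) / n for column in zip(*profiles)]
    return sum(math.dist(p, centroid) for p in profiles) / n
```

Under these assumptions, calling `dispersion` on a set of AI-generated stories and again on a set of human-written stories would give two numbers to compare; the finding described above corresponds to the AI set producing the smaller one.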

The “how much better is it, really?” question has been addressed more directly in benchmark and expert-rating studies, though usually not focused only on free tiers. A 2025 ICLR paper introducing LongGenBench evaluated a range of frontier models on super-long-form generation tasks and found that, while they can follow moderately complex instructions and maintain local coherence, they still struggle with global structure, long-range consistency, and instruction adherence over 16k–32k tokens, especially when asked to interleave constraints and narrative arcs.6

In scientific and technical writing, a Berkeley Haas report synthesized in “AI Is Reshaping Scientific Publishing and What Comes Next” concluded that AI-assisted manuscripts are, on average, more linguistically sophisticated than many human-written ones but not more scientifically sound; indeed, in large preprint datasets, higher surface polish in AI-augmented papers correlated with a lower probability of eventual journal publication, implying that textual sophistication has become decoupled from substantive quality.13 Related work in Scientific Reports compared six LLMs with human researchers on standard research-writing tasks and found that human authors still outperformed models on originality, critical framing, and methodological nuance, even when models matched or exceeded them on clarity and grammatical correctness.20

Your sense that improvement feels “exponential” is also being echoed—and sometimes challenged—by commentators who live inside the writing and publishing ecosystem. A 2025 Tech Xplore report on stylometry emphasized just how quickly the “old tells” of AI writing have vanished: formulaic openings, rigid sentence lengths, and clumsy transitions that were easy to spot in 2022–2023 are now rare in major models, especially when users lightly edit the output.14

A 2025 article aimed at business and content writers observed that new models had “closed the gap,” blending sentence lengths, mimicking casual slang, and sprinkling in mild opinions, making off-the-shelf AI drafts “passable” for many professional contexts and hard to distinguish from lower-tier human writing without close scrutiny.4 A 2026 practitioner guide for novice bloggers describes mainstream tools like ChatGPT, Copy.ai, and Writesonic as covering the entire writing workflow (from outline to SEO-tuned blog post) and cites survey data suggesting that roughly four out of five readers cannot reliably distinguish advanced AI writing from human writing once a human editor has done a light pass, reinforcing the idea that what you are noticing is a widespread, not idiosyncratic, phenomenon.5

At the same time, empirical studies and reflective essays warn that these gains in fluency come with systemic changes in human writing itself. An NBC report on a recent multi-team study led by researchers at Google and several universities shows that repeated use of LLMs as editors pushes human authors toward a more impersonal, generic voice: essays revised with AI exhibit heavier pronoun use and fewer personal anecdotes, and LLM “editing” tends to substitute large portions of text rather than making surgical word-level changes, often shifting tone and even intended meaning.11

Complementing this, the Warwick study detected cohort-level shifts in students’ vocabulary—sharp rises, then falls, in words like “delve” and “intricate” that are stereotypically associated with AI text—suggesting an arms race in which writers both adopt and then consciously suppress model-like language as detection norms and institutional policies evolve.15,18 A commentary in The Transmitter argues that while AI drafting accelerates production and equalizes linguistic polish, especially for non-native English speakers, it may erode the “cognitive work” of wrestling with prose, which historically has been tightly coupled to conceptual clarity and scientific insight.16

Causally, the rapid improvement you perceive is being driven by at least four intertwined trends: model-scale and architecture advances, alignment and instruction-tuning, tool-chain integration, and data feedback loops. A 2025 systematic review of LLM evaluation notes that, across dozens of models released between 2019 and 2023, improvements in output quality on writing-related benchmarks track not only parameter count but also more sophisticated fine-tuning, reward modeling, and evaluation pipelines that explicitly target coherence, grammaticality, and user satisfaction, all of which disproportionately benefit prose quality.1

As you’ve probably seen in your own usage, the addition of retrieval-augmented generation and real-time web integration means that current free-tier systems can weave accurate, up-to-date facts into stylistically competent prose, raising perceived quality because fewer obvious factual errors break the illusion of intelligence.5,13 On top of that, the ecosystem of rewriting tools, “AI humanizers,” and template-driven assistants forms a second layer of optimization: authors feed rough drafts into these systems and then lightly edit the output, producing hybrid prose that looks both fluent and “humanized,” and this hybrid text itself increasingly seeps back into training or adaptation corpora, reinforcing prevailing patterns.4,5,18

If you ask “where” these changes are most visible, the evidence points to mass, lower-stakes, and semi-structured writing tasks rather than high-end literary or deeply theoretical work. Studies in higher education show the biggest stylistic shifts in generic lab reports and essays, where structure is constrained and success is largely defined by clarity and formal tone.15,18 In scientific publishing, the most dramatic changes appear in drafts, preprints, and peer-review reports, where AI is used to “polish” language and expand sections rather than to generate entire papers from scratch, leading to a surge in manuscript volume and to what one observer calls an “evaluation crisis,” as traditional cues like sophisticated language no longer reliably signal scientific merit.13

In professional and marketing contexts, non-expert but literate users now rely on AI tools to generate blogs, newsletters, and product copy that are “good enough” for commercial use, something that was barely viable three years ago.4,5,9 Finally, in creative writing and literary experiments, stylometric work demonstrates that, although models can imitate genres and produce readable stories, they still lack the deep stylistic variance and idiosyncratic voice exhibited by human authors, a gap that remains stable even as surface fluency improves.14,17

On the “how fast and what next” question—the trajectories—the most sober assessments suggest continued rapid improvement in surface qualities and task coverage, coupled with slow, uneven gains in deeper narrative and conceptual abilities. Benchmark work like LongGenBench shows that each model generation improves at maintaining local coherence and following moderately complex instructions across long outputs, but also that even the best systems degrade in coherence and faithfulness as length increases, pointing to a ceiling that has not yet been broken.6

Evaluation surveys and expert consensus pieces in 2025 argue that we need richer, human-centered metrics for writing quality, beyond BLEU-like scores or generic preference models, because LLMs are now saturating those metrics while still failing at subtler aspects like voice, intent preservation, and audience-aware framing.1,8 A 2025–2026 cluster of studies on AI and scientific publishing anticipates “recursive loops” in which AI-generated or AI-polished prose floods both the training data and the evaluative infrastructure (reviewers using AI to assess AI-generated texts), raising the risk that future models optimize even more aggressively for a bland, globally averaged style that scores well on automated metrics but feels strangely hollow.13,16,20

The implications are substantial. For individual writers—especially students, non-native English speakers, and time-pressed professionals—free-tier chatbots now offer a path to instantly competent prose, lowering the barrier to participation in text-heavy domains but also tempting users to outsource cognitive labor that used to be integral to learning and discovery.12,13,16 For educators and gatekeepers, there is a growing recognition that traditional heuristics (polish, vocabulary, formality) no longer map cleanly onto underlying skill or originality, forcing assessment systems to move toward process-oriented evaluation, oral defenses, and genre-specific tasks that are less easily delegated.15,18

For culture and knowledge production, commentators worry that if AI-mediated styles become dominant, we may see a drift toward homogeneity: a world where most public-facing prose is smooth, mildly positive, and rhetorically tasteful but less anchored in distinctive perspective or lived experience.11,13,14,17 At the same time, there is a countervailing hope that, used deliberately, these tools can free human writers to spend more time on conceptual work, critical thinking, and genuinely original expression, with AI handling the scaffolding and surface work.13,16 In that sense, you are not hallucinating the qualitative leap; the startling part is real, but studies suggest that what feels like exponential improvement in “how it reads” masks a more complicated story in “what it actually means” and “what it does to us as writers and readers.”11,13,14,15,18

References

1. “Evaluating large language models: a systematic review of efficiency…” (Frontiers in Computer Science, 2025). https://www.frontiersin.org/articles/10.3389/fcomp.2025.1523699/full

2. “ChatGPT, Gemini, Claude Benchmarked For Writing” (LinkedIn, 2025). https://www.linkedin.com/pulse/chatgpt-gemini-claude-benchmarked-writing-where-score-gruener-1grae

3. “Investigating a customized generative AI chatbot for automated essay scoring in a disciplinary writing task” (ScienceDirect, 2025). https://www.sciencedirect.com/science/article/pii/S1075293525000467

4. “AI vs. Human Writing: How to Spot the Difference Instantly” (Noobpreneur, 2025). https://www.noobpreneur.com/2025/11/21/ai-vs-human-writing-how-to-spot-the-difference-instantly

5. “AI Writing for Novices: Your Beginner’s Guide to Blogging 2026” (GenWrite, 2026). https://genwrite.co/blog/from-blank-page-to-blog-post-ai-writing-for-novices-2026

6. “Benchmarking Long-Form Generation in Long Context LLMs” (ICLR 2025). https://proceedings.iclr.cc/paper_files/paper/2025/file/141304a37d59ec7f116f3535f1b74bde-Paper-Conference.pdf

7. “Has anyone noticed a decline in quality? The dialogues don’t feel the same” (Reddit, 2025). https://www.reddit.com/r/WritingWithAI/comments/1m932o9/has_anyone_noticed_a_decline_in_quality_the

8. “2025 Expert consensus on retrospective evaluation of large language models” (ScienceDirect, 2025). https://www.sciencedirect.com/science/article/pii/S2667102625001044

9. “Claude 4 vs. ChatGPT-5: Which AI is Best for Writing in 2026?” (Vertu, 2026). https://vertu.com/lifestyle/claude-vs-gpt-the-ultimate-ai-writing-showdown-in-2026

10. “Best AI Essay Writers for Students and Professionals 2025” (Enago, 2025). https://www.enago.com/academy/guestposts/jaymarks/best-ai-essay-writers-students-professionals-2025

11. “AI is changing the style and substance of human writing, study finds” (NBC News, 2026). https://www.nbcnews.com/tech/tech-news/ai-changing-style-substance-human-writing-study-finds-rcna263789

12. “Using ChatGPT to write an essay lowers brain activity: MIT study” (USA Today, 2025). https://www.usatoday.com/story/tech/2025/06/20/chatgpt-artificial-intelligence-mit-brain-activity-study/84285928007

13. “AI Is Reshaping Scientific Publishing and What Comes Next” (ETC Journal, 2026). https://etcjournal.com/2026/01/27/ai-is-reshaping-scientific-publishing-and-what-comes-next

14. “New study reveals that AI cannot fully write like a human” (Tech Xplore, 2025). https://techxplore.com/news/2025-12-reveals-ai-fully-human.html

15. “Positive and polished: Student writing has evolved in the AI era” (EurekAlert, 2025). https://www.eurekalert.org/news-releases/1108923

16. “From bench to bot: Why AI-powered writing may not deliver on its promise” (The Transmitter, 2025). https://www.thetransmitter.org/from-bench-to-bot/from-bench-to-bot-why-ai-powered-writing-may-not-deliver-on-its-promise

17. “New study reveals that AI cannot fully write like a human” (University College Cork news release, 2025). https://www.ucc.ie/en/news/2025/new-study-reveals-that-ai-cannot-fully-write-like-a-human.html

18. “How student writing has evolved in the AI era” (Phys.org, 2025). https://phys.org/news/2025-12-student-evolved-ai-era.html

19. “Accelerating AI Relentless Change: 2026 Predictions” (Jakob Nielsen, LinkedIn, 2026). https://www.linkedin.com/posts/jakobnielsenphd_my-main-prediction-for-2026-…

20. “Human researchers are superior to large language models in writing…” (Scientific Reports, 2025). https://www.nature.com/articles/s41598-025-28993-5

###
