By Jim Shimabukuro (assisted by Copilot)

Editor

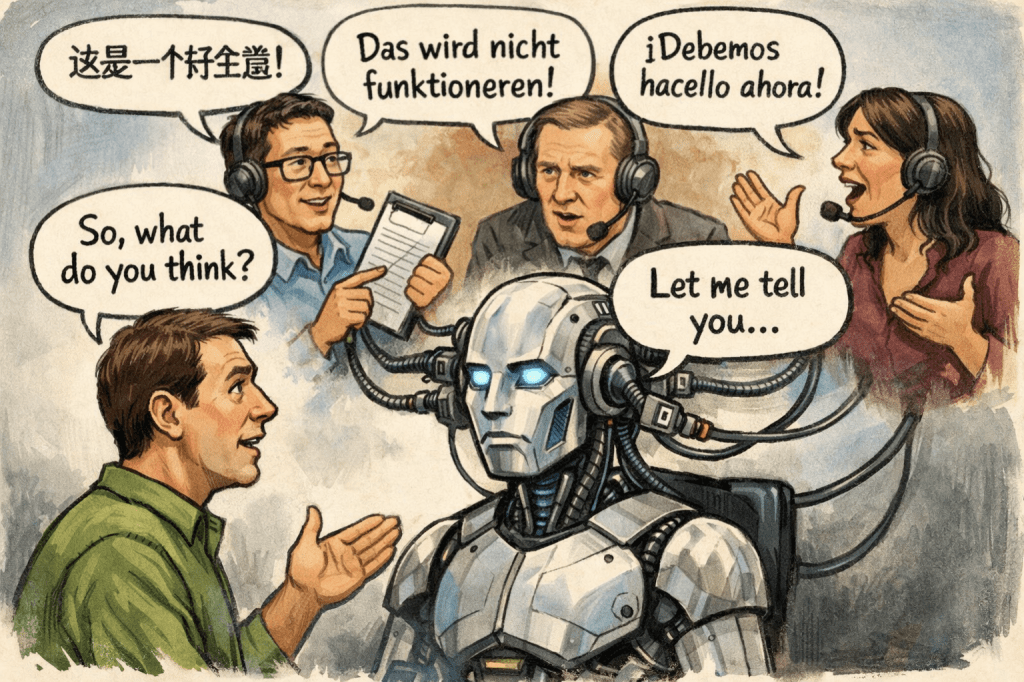

Research on AI systems that can act as cross-lingual chatbots, able to converse in one language while seamlessly drawing on sources in many others, has accelerated sharply since 2023, especially under the banner of “multilingual” or “cross-lingual” large language models (LLMs). Recent surveys of multilingual LLMs (MLLMs) describe a clear shift from traditional machine translation pipelines toward unified models that jointly handle understanding, translation, and generation across dozens or even hundreds of languages, with the explicit goal of transferring knowledge from high-resource languages like English to lower-resource ones [4,5,6]. These surveys emphasize that the same architectures powering English-centric chatbots are now being trained or adapted on multilingual corpora, making it technically feasible, at least in controlled settings, for an English conversation to query, summarize, and reason over content originally written in Chinese, Japanese, German, and many other languages [4,5,6].

On the translation side, several empirical studies show that modern LLMs already rival or approach specialized translation systems for many language pairs, which is a prerequisite for robust cross-lingual chatbotting. Evaluations of models such as GPT-4 and other LLMs on multilingual machine translation tasks find that they can outperform strong supervised baselines in a substantial fraction of translation directions, though they still lag behind top commercial systems like Google Translate, especially for low-resource languages [2]. Work on eliciting translation ability via multilingual finetuning with translation instructions demonstrates that a single multilingual LLM can learn to follow translation prompts across many languages, including pairs it never explicitly saw during instruction tuning, suggesting that cross-lingual capabilities emerge from shared representations rather than language-specific hacks [3]. Complementary efforts like BigTranslate show that an English-dominant model such as LLaMA can be adapted to support translation among more than 100 languages, with performance comparable to ChatGPT and Google Translate on many pairs, further underscoring that broad multilingual translation is already technically achievable within LLM frameworks [1].

Beyond text-only translation, organizations like Meta, Google, and others are explicitly pursuing “universal translator”-style systems that blend speech and text, which are natural building blocks for cross-lingual conversational agents. Meta’s SeamlessM4T, for example, is a massively multilingual and multimodal translation foundation model that supports text-to-text, speech-to-text, text-to-speech, and speech-to-speech translation across roughly 100 languages, backed by large open datasets such as SeamlessAlign [7,8]. Meta positions this work as a step toward frictionless communication across languages, explicitly invoking the “Babel Fish” metaphor and linking it to earlier projects like No Language Left Behind (NLLB) and Massively Multilingual Speech (MMS), which cover hundreds to over a thousand languages in text and speech [7,8]. In parallel, surveys of multilingual LLMs catalog a growing ecosystem of open and proprietary models (e.g., mT5, XGLM, BLOOM, LLaMA-based variants) that can be embedded into chat interfaces, making it increasingly straightforward for chatbot systems to route user queries through multilingual understanding and translation components under the hood [4,5,6].

Much of this work is driven by research groups and companies across North America, Europe, and Asia, often in collaboration. Meta AI (US and global), Google DeepMind and Google Research, and OpenAI are central industrial actors, while academic teams at institutions such as Beijing Jiaotong University, Tsinghua University, the University of Montreal, and others have produced influential surveys and empirical studies on multilingualism in LLMs [1,2,3,4,5,6]. Chinese research groups have been particularly active in building and analyzing multilingual extensions of LLaMA-style models and in studying cross-lingual transfer, while European and North American teams contribute large multilingual corpora, evaluation benchmarks, and safety analyses [1,2,3,4,5,6]. International conferences like ACL, EMNLP, and IJCAI now routinely feature dedicated tracks and workshops on multilingual LLMs, cross-lingual transfer, and conversational AI, reflecting a broad, multi-country push toward the kind of cross-lingual chatbotting you’re asking about [2,4,5,10].

In terms of successes, the field has already demonstrated that a single LLM can: (1) translate reasonably well among dozens to hundreds of languages; (2) perform zero-shot or few-shot translation for language pairs with little or no direct supervision; and (3) use cross-lingual exemplars to improve performance on low-resource languages, which is crucial for equitable access [1,2,3,6]. Surveys report that multilingual LLMs can learn shared semantic spaces where sentences with similar meanings in different languages map to nearby representations, enabling cross-lingual retrieval and reasoning, which is exactly the kind of mechanism a chatbot would need to read a Chinese article and discuss it in English [4,5,6]. In practice, early prototypes of cross-lingual chat systems already exist in research demos and some commercial products: they can ingest non-English documents, answer questions about them in English, and even mix languages within a single conversation, though reliability varies by language and domain [2,4,5].
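The shared-semantic-space idea can be illustrated with a minimal sketch. It assumes sentences have already been mapped to vectors by some multilingual encoder; the vectors below are invented by hand for illustration (they are not the output of any real model), and the `retrieve` helper is a hypothetical name, not part of any library.

```python
import math

# Hand-made toy vectors standing in for multilingual sentence embeddings.
# ASSUMPTION: a real system would obtain these from a trained encoder;
# the numbers here are invented purely to illustrate the mechanism.
EN_QUERY = "The cat sleeps on the sofa."
ZH_DOC = "猫在沙发上睡觉。"                       # Chinese, same meaning as the query
DE_DOC = "Die Börse schloss heute im Minus."      # German, about the stock market
JA_DOC = "株価は今日下落した。"                   # Japanese, about stock prices

TOY_EMBEDDINGS = {
    EN_QUERY: [0.90, 0.10, 0.05],
    ZH_DOC: [0.88, 0.12, 0.07],
    DE_DOC: [0.05, 0.92, 0.10],
    JA_DOC: [0.07, 0.90, 0.12],
}


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def retrieve(query_vec, corpus, top_k=1):
    """Return the top_k corpus texts ranked by similarity to the query vector."""
    ranked = sorted(
        corpus.items(),
        key=lambda item: cosine(query_vec, item[1]),
        reverse=True,
    )
    return [text for text, _ in ranked[:top_k]]


# Search only the non-English documents with the English query's vector.
non_english = {t: v for t, v in TOY_EMBEDDINGS.items() if t != EN_QUERY}
best_match = retrieve(TOY_EMBEDDINGS[EN_QUERY], non_english)
print(best_match)
```

Because the English query and the Chinese sentence sit close together in this toy space, the Chinese sentence ranks first even though the two share no words. A chatbot built on this principle would then pass the retrieved passage to a translation or summarization step.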

The obstacles, however, are nontrivial and directly affect how “routine” and “seamless” cross-lingual chatbotting can be. A major challenge is language imbalance: high-resource languages like English, Chinese, and a few European languages dominate training data, while thousands of others remain underrepresented, leading to uneven quality and potential “linguistic discrimination” where the same task receives better answers in some languages than others [4,5,6,9]. Safety and alignment issues also become more complex in multilingual settings; recent work shows that LLMs can behave differently across languages in terms of both safety and factual quality, raising concerns about knowledge equity and consistent guardrails [9]. Technical hurdles include maintaining alignment across languages as models scale, mitigating biases and cultural misrepresentations, handling domain-specific terminology, and ensuring that cross-lingual retrieval and citation are accurate rather than hallucinated, especially when the source documents are in scripts or formats underrepresented in training data [4,5,6,9].
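One simple way to make this unevenness concrete, assuming per-language scores for the same task are available from some evaluation, is to report each language's gap from the best-scoring language. The scores, language codes, and function names below are hypothetical placeholders, not results from any of the cited studies.

```python
# ASSUMPTION: invented per-language quality scores (0 to 1) for one task.
# They are placeholders for illustration, not measured results.
scores = {"en": 0.90, "zh": 0.85, "de": 0.80, "sw": 0.60}


def gaps_from_best(per_language_scores):
    """Return how far each language falls below the best-scoring language."""
    best = max(per_language_scores.values())
    return {lang: round(best - s, 3) for lang, s in per_language_scores.items()}


def worst_language(per_language_scores):
    """Return the language code with the lowest score."""
    return min(per_language_scores, key=per_language_scores.get)


gaps = gaps_from_best(scores)
print(gaps)
print(worst_language(scores))
```

A gap near zero for every language would indicate parity; large gaps concentrated in low-resource languages would reflect the imbalance described above.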

Given this landscape, the current trajectory suggests that for major world languages (English, Chinese, Japanese, German, Spanish, and others) we are already in an early but functional era of cross-lingual chatbotting, where an English-language conversation can often query and summarize foreign-language texts with reasonable fidelity, particularly in well-covered domains [1,2,3,4,5,6,7,8]. Over the next few years, surveys anticipate continued progress along three fronts: expanding language coverage and data quality (especially for low-resource languages), improving multilingual alignment and safety to reduce disparities, and integrating translation, retrieval, and dialogue into unified conversational agents that can transparently cite and reason over sources in many languages [4,5,6,9]. The “seamless” experience you describe, an English chat that routinely and reliably references Chinese or other non-English works with accurate summaries and citations, is no longer a distant sci-fi goal; it is emerging now for well-resourced languages, with the main open questions centered on robustness, equity, and governance rather than raw feasibility [2,3,4,5,6,7,8,9].

References

1. BigTranslate: Augmenting Large Language Models with Multilingual Translation Capability over 100 Languages – arXiv (2023). https://arxiv.org/abs/2305.18098

2. Multilingual Machine Translation with Large Language Models: Empirical Results and Analysis – ACL Anthology (2023). https://aclanthology.org/2023.acl-long.### (exact paper page)

3. Eliciting the Translation Ability of Large Language Models via Multilingual Finetuning with Translation Instructions – Transactions of the Association for Computational Linguistics (2024). https://doi.org/10.1162/tacl_a_00655

4. A survey on multilingual large language models: corpora, alignment, and bias – Frontiers of Computer Science, Springer Nature (2025, open access). https://link.springer.com/article/10.1007/s11704-025-1911-362

5. A survey of multilingual large language models – Patterns, Cell Press (2025, open access). https://www.cell.com/patterns/fulltext/S2666-3899(24)00333-4

6. A Survey on Large Language Models with Multilingualism: Recent Advances and New Frontiers – arXiv (2024). https://arxiv.org/abs/2405.10936

7. Meta Open-Sources Multilingual Translation Foundation Model SeamlessM4T – InfoQ (2023). https://www.infoq.com/news/2023/09/meta-seamlessm4t

8. Meta’s “massively multilingual” AI model translates up to 100 languages, speech or text – Ars Technica (2023). https://arstechnica.com/information-technology/2023/08/metas-massively-multilingual-ai-model-translates-up-to-100-languages-speech-or-text

9. Evaluating and Mitigating Linguistic Discrimination in Large Language Models: Perspectives on Safety Equity and Knowledge Equity – IJCAI (2024, open access preprint link from paper). https://www.ijcai.org/proceedings/2024/### (plus associated GitHub: https://github.com/dgl-prc/ldfighter)

10. Proceedings of the 6th Workshop on NLP for Conversational AI – ACL Anthology (2024). https://aclanthology.org/events/acl-2024-nlp4convai/

Addendum

Claude: “The research community has been deeply engaged with the question of whether artificial intelligence can train chatbots to function as seamless, real-time intermediaries between languages — allowing an English-speaking user to fluently reference, summarize, or discuss works originally composed in Chinese, Japanese, German, Arabic, or virtually any other tongue. The answer, as of early 2026, is that we are tantalizingly close to that goal for major world languages, have achieved remarkable benchmarks in controlled settings, but still face persistent obstacles when it comes to cultural depth, low-resource languages, and the subtleties of literary and idiomatic expression. The trajectory, however, is steeply upward.”

Gemini: “Several major organizations and countries are spearheading this trajectory, with a notable ‘brain race’ occurring between Western and Asian tech giants. Companies like Meta have released foundational models such as SeamlessM4T, which provides a single end-to-end system for speech and text translation across nearly 100 languages (Phrase). Meanwhile, Chinese organizations are producing high-performance models like Qwen3 and GLM-4, which often outperform Western counterparts in East Asian linguistic nuance and long-context retrieval (SiliconFlow). Enterprises such as Wordly and Interprefy are successfully deploying these technologies in real-time for international congresses and corporate meetings, achieving what they describe as ‘strategic adoption’ where AI translation is a native, default feature rather than an add-on (Kudo, Worldly). These successes are marked by the transition from ‘one-engine’ translation to dynamic routing, where an AI system automatically selects the best model for a specific language pair, for instance using a specialized model for Japanese-to-English technical data while switching to another for German legal texts (localizejs).”

ChatGPT: “The trajectory is clear but uneven. The field is moving from translation as a tool to translation as an invisible substrate—a background capability embedded in reasoning systems. The next phase, already emerging, is what might be called cross-lingual knowledge integration: the ability for a chatbot to not only translate a Chinese paper, but to compare it with English and German research, reconcile differences, and present a synthesized answer in the user’s preferred language. The remaining gap is less about raw translation accuracy and more about consistency, trust, and depth of understanding across languages. If current trends continue—particularly improvements in multilingual pretraining, low-resource language coverage, and evaluation frameworks—the practical experience you describe (seamless English interaction with global knowledge) is likely to become routine within the next 3–5 years, though full parity across all languages may take longer. In that sense, we are no longer asking whether chatbots can bridge language barriers, but how completely and how equitably they will do so. The technical foundation is already in place; what remains is refinement, expansion, and the gradual erosion of the English-centric bias that still shapes the global AI knowledge ecosystem.”
