By Jim Shimabukuro (assisted by Grok)

Editor

[Related: Jan 2026, Dec 2025, Nov 2025, Oct 2025, Sep 2025, Aug 2025]

1. The “Periodic Table” for Multimodal AI Frameworks

A novel mathematical framework that unifies and classifies methods for building multimodal AI systems (those that integrate text, images, audio, and video) drew wider attention in early March 2026 as a quiet but significant advance. Physicists at Emory University developed the Variational Multivariate Information Bottleneck Framework, which shows that many leading AI techniques share a core principle: compress the input data while preserving only the elements most predictive for a given task. The framework acts like a control knob, letting developers dial in exactly which information to retain or discard when they design the loss functions used in model training. It organizes disparate AI approaches into a structured “periodic table,” in which each “cell” corresponds to how a method balances compression against reconstruction accuracy. The researchers tested it on benchmark datasets and retroactively applied it to dozens of existing techniques, showing that it can help predict which algorithms will succeed on specific problems, estimate how much training data they will need, and even suggest entirely new methods.1
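One of the simplest well-known objectives of this compress-but-predict kind is the variational information bottleneck (VIB) loss: a prediction term plus β times a compression term, where β is the “knob.” The sketch below is not the Emory framework itself, which generalizes the idea to multiple variables and modalities. It assumes a Gaussian encoder with diagonal covariance, a standard-normal prior, and a classifier decoder, and the function names are hypothetical.

```python
import math
from typing import Sequence


def gaussian_kl_to_standard_normal(mu: Sequence[float], log_var: Sequence[float]) -> float:
    """KL( N(mu, diag(exp(log_var))) || N(0, I) ): the compression term.

    Measures how much information the latent code carries beyond the prior.
    """
    return 0.5 * sum(m * m + math.exp(lv) - 1.0 - lv for m, lv in zip(mu, log_var))


def vib_loss(
    mu: Sequence[float],
    log_var: Sequence[float],
    class_probs: Sequence[float],
    label: int,
    beta: float,
) -> float:
    """Variational information bottleneck objective for a single example (a sketch).

    prediction term:  cross-entropy of the decoder's class probabilities
    compression term: KL divergence of the encoder's Gaussian code from the prior
    beta:             the knob trading compression against predictive accuracy
    """
    prediction = -math.log(class_probs[label])
    compression = gaussian_kl_to_standard_normal(mu, log_var)
    return prediction + beta * compression
```

Setting `beta` to 0 recovers an ordinary classification loss; raising it makes every bit retained in the latent code more expensive, so the model is pushed to keep only what predicts the label.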

The development began to gain traction on March 4, 2026, when Emory University publicized the work through ScienceDaily, bringing renewed attention to research originally published in a specialized journal the previous year. Lead contributors included Eslam Abdelaleem (then a recent Emory physics PhD, now a postdoctoral fellow at Georgia Tech), senior author Ilya Nemenman (Emory professor of physics), and co-author K. Michael Martini (a former Emory postdoctoral researcher). The work was carried out at Emory University in Atlanta, Georgia, through a physics-inspired lens that prioritizes fundamental principles over empirical trial and error. As Nemenman explained, many successful AI methods “boil down to a single, simple idea—compress multiple kinds of data just enough to keep the pieces that truly predict what you need.”1

This matters because multimodal AI has long relied on ad hoc experimentation, which produces bloated models that demand excessive data and compute while risking the inclusion of irrelevant or biased features. By offering a principled way to tailor models, the framework promises more accurate, efficient, and trustworthy systems that need less energy and data, reducing environmental impact and enabling frontier experiments in data-scarce domains such as biology and personalized medicine. Abdelaleem noted that the goal is to “design AI models that are tailored to the problem… while also allowing them to understand how and why each part of the model is working.” With multimodal applications proliferating, this under-the-radar organizational tool could speed innovation without the usual computational waste, quietly reshaping how AI engineers approach complex, real-world data integration.1

2. Meta’s TRIBE v2 Brain Predictive Foundation Model

Meta AI unveiled TRIBE v2 on March 26, 2026, a groundbreaking predictive foundation model designed as a digital twin of human neural activity, capable of forecasting brain responses to complex stimuli including sights, sounds, and language. Trained on data from more than 700 healthy volunteers exposed to images, podcasts, videos, and text, the model achieves a 70-fold increase in resolution over earlier iterations, which relied on low-resolution fMRI (functional magnetic resonance imaging) data from just four individuals. It excels at zero-shot generalization, predicting brain activity for new subjects, languages, and tasks it never encountered during training. The company released the full model weights on Hugging Face, open-sourced the codebase on GitHub, published an accompanying research paper, and provided an interactive demo, all under a CC BY-NC license, inviting the global research community to build on it.2

Traction for TRIBE v2 began immediately upon its March 26 announcement, as the open release positioned it for rapid adoption in both neuroscience labs and AI development teams. The work was led by Meta AI’s research teams, based in the United States, with contributions from international collaborators in brain-imaging studies. By simulating high-resolution neural patterns in silico, TRIBE v2 lets scientists test hypotheses about brain processing without recruiting human participants, which could dramatically shorten discovery cycles for neurological disorders that affect hundreds of millions of people worldwide. It can also feed insights back into AI design, helping engineers incorporate biologically plausible mechanisms that could yield more robust, efficient, and human-aligned systems.

Its importance lies at the crossroads of computational neuroscience and practical AI advancement. Traditional brain-modeling approaches have been limited by small sample sizes and low resolution, but TRIBE v2 overcomes these barriers to create a scalable “in silico neuroscience” platform. This could transform treatment pathways for conditions like Alzheimer’s or epilepsy by enabling rapid virtual experimentation, while simultaneously guiding the next generation of AI architectures toward greater biological fidelity. In a field often dominated by headline-grabbing chatbots and generative tools, TRIBE v2 represents a quieter yet profound step toward closing the loop between artificial and biological intelligence.

3. Hexagon AB’s Apollo AI for Predictive Metrology Monitoring

In March 2026, Hexagon AB launched Apollo, an AI-powered predictive condition monitoring system specifically engineered for metrology equipment used in precision manufacturing and quality inspection. The tool analyzes machine data in real time to detect subtle patterns signaling wear, calibration drift, or impending failure long before they disrupt production lines. Rather than relying on scheduled maintenance or reactive repairs, Apollo shifts metrology operations to a proactive paradigm, using advanced pattern recognition to forecast equipment health with high accuracy. Hexagon, a global leader in digital reality solutions headquartered in Sweden with operations worldwide, integrated the system into its existing metrology portfolio to address a longstanding pain point: when inspection tools go offline, entire manufacturing validation processes halt, creating costly bottlenecks.3
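Hexagon has not published how Apollo works internally. As a hypothetical illustration of the general idea of catching slow calibration drift before it becomes a failure, the sketch below implements a classic one-sided cumulative-sum (CUSUM) control chart, a long-established statistical process control technique. The notion of “readings” as a probe’s deviation from a reference artifact, and the `target`, `k`, and `h` values, are assumptions chosen for illustration.

```python
from typing import Iterable, Optional


def cusum_first_alarm(
    readings: Iterable[float], target: float, k: float, h: float
) -> Optional[int]:
    """One-sided (upward) CUSUM drift detector (illustrative sketch).

    readings: e.g. a probe's measured deviation from a reference artifact, one per check
    target:   the in-control mean of the readings
    k:        slack; per-reading deviations up to this size are treated as noise
    h:        decision threshold on the accumulated excess deviation
    Returns the index of the first reading that triggers an alarm, or None.
    """
    s = 0.0
    for i, x in enumerate(readings):
        # Accumulate only the part of each deviation that exceeds the slack,
        # and never let the running sum fall below zero while things look in control.
        s = max(0.0, s + (x - target - k))
        if s > h:
            return i
    return None


# Ten in-control checks, then a persistent 0.5-unit drift.
alarm_at = cusum_first_alarm([0.0] * 10 + [0.5] * 10, target=0.0, k=0.1, h=1.0)
```

No single drifted reading of 0.5 exceeds the threshold of 1.0, yet the accumulated excess trips the alarm on the third drifted reading (index 12). That accumulation is what lets this family of methods flag gradual wear earlier than a simple per-reading tolerance check.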

The development gained traction throughout March 2026 as Hexagon rolled out the solution amid growing industry emphasis on AI-driven reliability in high-stakes production environments. Deployment is occurring across manufacturing facilities globally, particularly in sectors requiring micron-level precision such as automotive, aerospace, and electronics. By targeting metrology—the often-overlooked backbone of quality assurance—Apollo delivers outsized returns: preventing unplanned downtime in inspection equipment translates directly into sustained production throughput and measurement accuracy.

This innovation matters because manufacturing has lagged in AI adoption for core operational hardware despite hype around robotics and automation. Apollo demonstrates how targeted AI can deliver immediate, measurable ROI in niche but critical domains, reducing waste, lowering maintenance costs, and enhancing overall equipment effectiveness. As factories increasingly operate under just-in-time pressures and sustainability mandates, predictive tools like this quietly elevate reliability standards without requiring wholesale factory overhauls. In an under-the-radar shift from experimental pilots to production deployment, Hexagon’s Apollo underscores AI’s maturing role as a practical guardian of industrial precision rather than a flashy disruptor.

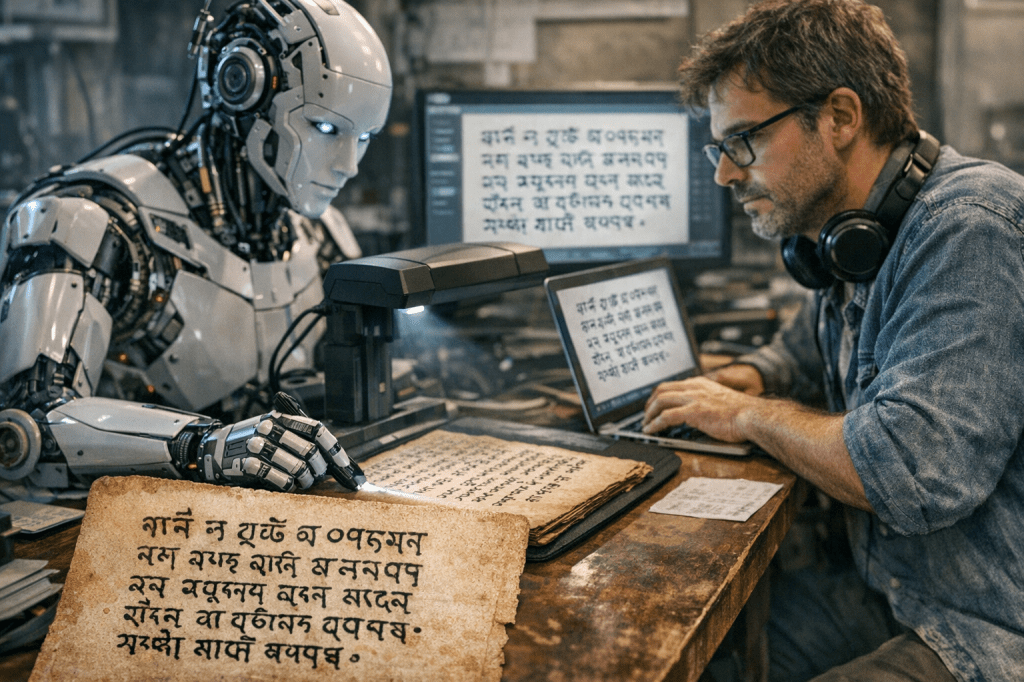

4. LORYA: AI Tool for Digitizing Written Cultural Heritage

On April 1, 2026, the United Nations Development Programme (UNDP), in partnership with Serbia’s Mathematical Institute of the Serbian Academy of Sciences and Arts (MI SANU) and the National Library of Serbia, officially launched LORYA, an open-source AI platform designed to turn hard-to-process written cultural heritage into clean, machine-readable text. The tool handles digitized documents that defeat conventional optical character recognition (OCR), including multilingual scripts, handwritten manuscripts, non-standard layouts, and historical texts, applying advanced AI data-processing techniques to extract accurate, structured content. Registered as a Digital Public Good and released under an open-source license, LORYA will be freely adaptable worldwide, and UNDP teams in Iraq and Nepal have already expressed interest in localizing it.4

Traction began on launch day in Belgrade, Serbia, where representatives of the project’s international backers, the governments of France and Japan, highlighted its potential. The platform was developed in Serbia through close collaboration among UNDP, academic institutions, and the national library, with targeted funding from both governments. Its immediate value lies in feeding high-quality textual data from underrepresented languages into AI training pipelines, enabling more inclusive language models while preserving historical archives and opening them to researchers, educators, and the public.

LORYA matters profoundly because the AI era risks widening the digital divide for non-dominant languages and cultures; vast troves of written heritage remain locked in inaccessible formats, invisible to modern models. By bridging this gap, the tool safeguards linguistic diversity, combats disinformation through better contextual data, and promotes human rights by ensuring cultural voices shape future AI systems. UNDP Serbia Resident Representative Yakup Beris captured its significance: initiatives like LORYA ensure “written heritage [becomes] visible and recognizable in the era of artificial intelligence,” fostering inclusive AI that respects global identities. In a landscape dominated by large-scale commercial models, this Serbia-originated solution quietly advances equitable AI development and cultural preservation on a global scale.4

5. AI-Enhanced ECG Interpretation for Occlusive Myocardial Infarction

A prospective clinical study presented on March 20, 2026, at the European Society of Cardiology’s Acute CardioVascular Care (ACVC) Congress in Lisbon found that AI-based ECG interpretation significantly outperformed conventional diagnostic pathways in detecting occlusive myocardial infarction (OMI) among patients without classic ST-elevation signs. Researchers including Federico Nani and Matthias Unterhuber of Bolzano Hospital in Italy evaluated the AI system (identified in related coverage as the Queen of Hearts AI-enabled technology) on 1,490 cases of suspected non-ST-elevation acute coronary syndrome. The AI correctly identified occlusive MI in 84% of confirmed cases, compared with just 42% for standard human ECG interpretation, and the study reported 77% sensitivity, 99% specificity, and a 98% negative predictive value. By quickly flagging or ruling out time-critical blockages, the tool enables faster triage and intervention.5
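The reported figures are standard diagnostic-accuracy metrics, and the sketch below shows how each is computed from a 2×2 table of outcomes. It also uses Bayes’ rule to show why negative predictive value depends on how common OMI is in the tested population. The function names are hypothetical, and the prevalence values are illustrative assumptions, not figures from the study.

```python
def diagnostic_metrics(tp: int, fp: int, tn: int, fn: int) -> dict:
    """Standard accuracy metrics from a 2x2 confusion matrix."""
    return {
        "sensitivity": tp / (tp + fn),  # share of true OMIs the test flags
        "specificity": tn / (tn + fp),  # share of non-OMI cases the test clears
        "ppv": tp / (tp + fp),          # share of flagged cases that are OMIs
        "npv": tn / (tn + fn),          # share of cleared cases that are truly non-OMI
    }


def npv_from_prevalence(sensitivity: float, specificity: float, prevalence: float) -> float:
    """NPV implied by sensitivity, specificity, and disease prevalence (Bayes' rule)."""
    true_neg = specificity * (1.0 - prevalence)   # healthy and correctly cleared
    false_neg = (1.0 - sensitivity) * prevalence  # diseased but missed
    return true_neg / (true_neg + false_neg)
```

Plugging in the reported 77% sensitivity and 99% specificity with an assumed 8% prevalence gives an NPV of about 0.98, while an assumed 30% prevalence gives about 0.91. A high NPV alongside moderate sensitivity is therefore consistent with a population in which most suspected cases turn out not to be occlusive MIs.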

The findings drew attention as soon as they were presented at the March 20 congress, spotlighting AI’s potential in emergency cardiology, where diagnostic uncertainty can delay life-saving treatment. The research came out of Bolzano Hospital and was shared internationally through the ESC platform. Because it is meant to augment rather than replace clinicians, the AI could fit into existing hospital workflows, particularly in settings that handle high volumes of ambiguous chest-pain cases.

This development matters because occlusive MIs (myocardial infarctions) without ST elevation (the ST segment is a specific section of the ECG, or EKG, tracing) remain notoriously difficult to diagnose promptly, contributing to higher mortality and more complications when treatment is delayed. The AI’s superior accuracy promises to reduce missed diagnoses, speed reperfusion therapy, and ultimately save lives while easing pressure on overburdened cardiac teams. As Dr. Nani concluded, “This simple, accessible AI-based approach demonstrated superior accuracy in identifying and excluding occlusive MI compared with conventional diagnostic pathways.” Within the broader push toward AI in healthcare, this application stands out as a practical, evidence-based tool that addresses a specific, high-stakes clinical gap, one that is trending among cardiologists yet remains largely below the general public’s radar.5

References

1. Scientists build a “periodic table” for AI (4 March 2026) – https://www.sciencedaily.com/releases/2026/03/260303145714.htm

2. Introducing TRIBE v2: A Predictive Foundation Model Trained to Understand How the Human Brain Processes Complex Stimuli (26 March 2026) – https://ai.meta.com/blog/tribe-v2-brain-predictive-foundation-model/

3. AI in Manufacturing March 2026: Hexagon, ABB and Lantek Reveal Key Industry Shifts (27 March 2026) – https://machinetoolnews.ai/ai-in-manufacturing-march-2026/

4. LORYA Launched – AI Tool for Supporting the Digitization and Preservation of Written Cultural Heritage (1 April 2026) – https://www.undp.org/serbia/news/lorya-launched-ai-tool-supporting-digitization-and-preservation-written-cultural-heritage

5. AI outperforms conventional diagnosis for certain types of heart attacks (20 March 2026) – https://www.escardio.org/news/press/press-releases/acvc-press/
