By Jim Shimabukuro (assisted by ChatGPT)

Editor

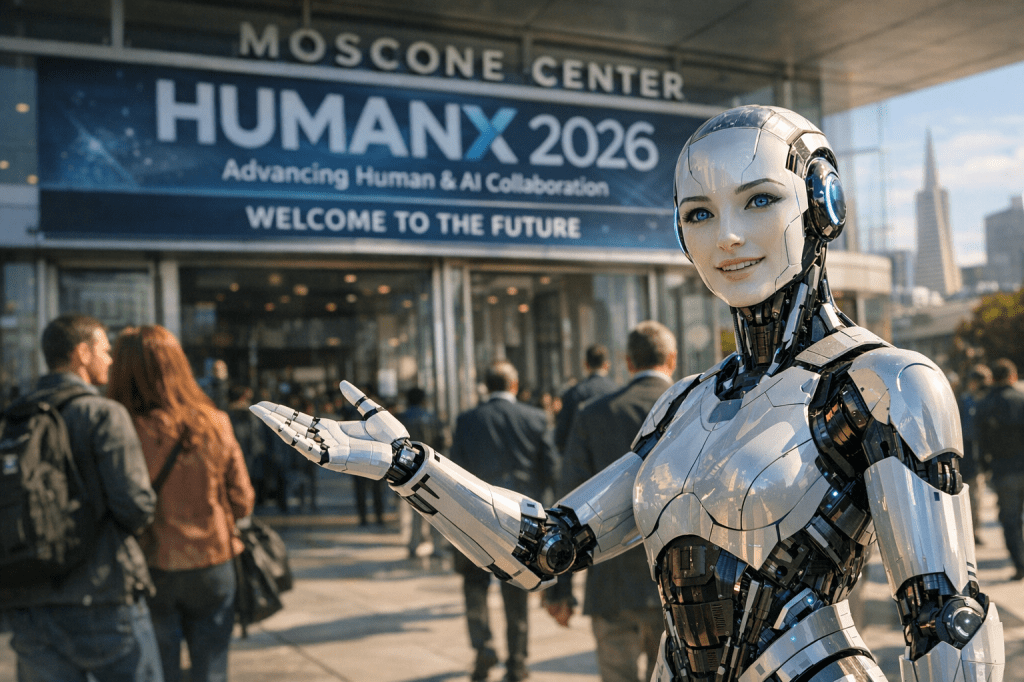

The April 6–9, 2026 HumanX conference at Moscone Center in San Francisco can be read not simply as a gathering of prominent technologists, but as a signal event in the consolidation of an AI-era worldview. Taken together, the remarks of speakers such as Fei-Fei Li, Matt Garman, Andrew Ng, Bret Taylor, Ali Ghodsi, Sarah Guo, Sridhar Ramaswamy, and Al Gore reveal a coherent narrative: AI in 2026 is no longer emerging—it is structuring the next phase of economic, institutional, and human development.

At the highest level, the conference reflects a decisive transition from AI as breakthrough to AI as baseline infrastructure. Matt Garman’s emphasis on enterprise-scale deployment captures this shift most directly. Organizations are no longer experimenting at the margins; they are embedding AI into core operations: customer service, logistics, software development, and decision-making pipelines [2]. This is reinforced by Sridhar Ramaswamy’s notion of “continuous intelligence,” where real-time data flows merge with AI systems to produce constant, automated insight loops [4]. What emerges is a picture of firms evolving into always-on cognitive systems, where the boundary between analysis and action is increasingly compressed.

Yet this infrastructural turn depends on a quieter but more consequential foundation: data supremacy. Ali Ghodsi’s argument that proprietary, well-governed data will outweigh model sophistication reframes the competitive landscape [1]. In earlier phases of the AI boom, attention centered on model scale: parameter counts, benchmark scores, and breakthrough architectures. By 2026, the HumanX discourse suggests a maturation: models are becoming commoditized, but high-quality, domain-specific data remains scarce and defensible. This insight aligns with the strategic positioning of companies like Databricks and Snowflake, whose platforms aim to unify data engineering, analytics, and machine learning into a single ecosystem.

At the same time, Andrew Ng’s intervention grounds the conversation in operational realism. While frontier models dominate headlines, Ng reiterates that most value creation comes from targeted applications (fraud detection systems, predictive maintenance, workflow automation) rather than speculative leaps toward artificial general intelligence [3]. His message functions as a corrective to both hype and paralysis: organizations need not wait for perfect AI; they can deploy effective AI now. In doing so, Ng implicitly democratizes the field, suggesting that AI advantage is less about access to the most advanced models and more about the discipline of implementation.

Running parallel to this pragmatic current is a more disruptive force identified by Sarah Guo: the rise of AI-native companies [1]. These firms are not adapting legacy processes; they are designing themselves around AI from inception. The historical analogy is to cloud-native startups of the 2010s, but the implications may be even more far-reaching. AI-native organizations can operate with radically lower headcounts, faster iteration cycles, and entirely new product categories. The HumanX synthesis suggests that incumbents face a dual challenge: not only must they adopt AI, but they must do so while competing against entities unburdened by pre-AI architectures. In this sense, AI is not merely a tool of optimization; it is a driver of creative destruction.

As capabilities expand and adoption accelerates, the question of governance becomes unavoidable. Bret Taylor’s focus on adaptive oversight highlights a growing tension: traditional regulatory systems move slowly, while AI evolves far faster than rulemaking cycles can follow [3]. The implication is that governance itself must become more dynamic, potentially incorporating real-time monitoring, iterative rulemaking, and closer collaboration between industry and public institutions. This is not simply a policy challenge; it is a structural one. The systems being governed are increasingly autonomous, probabilistic, and opaque, raising fundamental questions about accountability and control.

It is here that Fei-Fei Li’s human-centered framework provides a normative anchor [2]. If Garman and Ramaswamy describe what AI is becoming, and Ghodsi and Guo describe how it will compete, Li addresses what it should be for. Her emphasis on interdisciplinary design and ethical alignment suggests that the next phase of AI development will require input not only from engineers, but from social scientists, educators, healthcare professionals, and communities themselves. The HumanX narrative thus expands beyond technology into civilizational design: how to ensure that increasingly powerful systems remain aligned with human values and social stability.

Al Gore’s intervention extends this ethical horizon to the planetary scale [2]. By positioning AI as a tool for climate mitigation (optimizing energy grids, enhancing environmental modeling, and accelerating sustainable innovation), he reframes AI not as an isolated technological revolution, but as a meta-technology capable of amplifying humanity’s response to existential challenges. This linkage between AI and climate underscores a broader theme at HumanX: the stakes of AI are no longer confined to industry disruption; they encompass the future of global systems.

Taken together, these perspectives converge on a central insight: AI in 2026 is entering its integrative phase. The early years of discovery and experimentation are giving way to a period of consolidation, where AI is woven into the fabric of organizations, economies, and societies. Three structural shifts define this phase. First, AI is becoming infrastructural—embedded, ubiquitous, and largely invisible. Second, competitive advantage is migrating from algorithms to data and implementation. Third, the scope of impact is expanding from economic productivity to governance, ethics, and planetary sustainability.

What distinguishes this moment is not any single breakthrough, but the synchronization of multiple transformations. Enterprises are scaling AI at the same time that startups are building themselves around it. Governance frameworks are struggling to keep pace even as ethical expectations intensify. Technical capabilities are advancing even as practical deployment becomes the primary differentiator. The HumanX conference, in this sense, serves as a microcosm of a broader transition: from the question of what AI can do to the more consequential question of how AI will shape the structures within which we live.

The trajectory implied by these speakers points toward a near future—by 2027 and beyond—in which AI is not a distinct sector but a universal layer, akin to electricity or the internet. Organizations will increasingly be judged by their ability to integrate AI into decision-making, individuals by their ability to collaborate with AI systems, and societies by their ability to govern AI responsibly. The challenge, as articulated across HumanX 2026, is to navigate this transformation in a way that preserves human agency while unlocking unprecedented capability.

References

- “HumanX 2026 Speakers” — https://www.humanx.co/speakers

- “Here’s What You Can Expect at HumanX 2026 AI Conference” — https://mymodernmet.com/humanx-2026-ai-conference/

- “HumanX Announces First 50 World-Class Speakers for 2026 AI Event” — https://www.businesswire.com/news/home/20250616593456/en/HumanX-Announces-First-50-World-Class-Speakers-for-2026-AI-Event

- “HumanX 2026 Speaker List (AIML Events)” — https://aiml.events/events/humanx-2026
